数据密集型应用系统设计

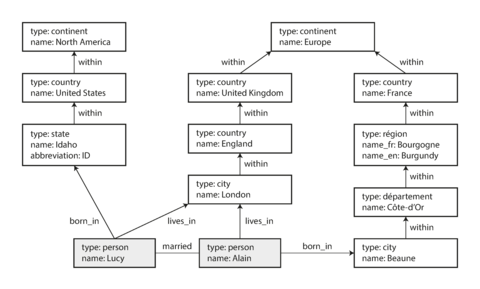

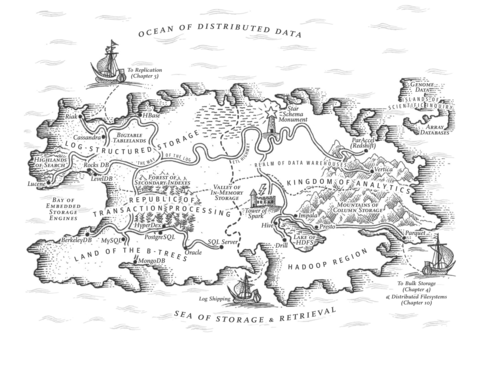

Designing Data-Intensive Applications

The Big Ideas Behind Reliable, Scalable, and Maintainable Systems

可靠、可扩展、可维护系统背后的核心思想

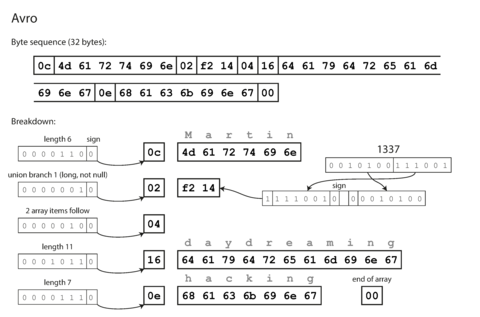

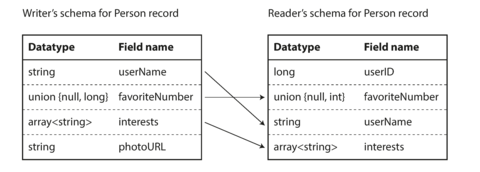

Martin Kleppmann

马丁·克莱普曼

Designing Data-Intensive Applications

by Martin Kleppmann

马丁·克莱普曼(Martin Kleppmann)

Copyright © 2017 Martin Kleppmann. All rights reserved.

版权所有 © 2017 Martin Kleppmann。保留所有权利。

Printed in the United States of America.

在美国印刷。

Published by O’Reilly Media, Inc., 1005 Gravenstein Highway North, Sebastopol, CA 95472.

由O’Reilly媒体公司出版,位于加利福尼亚州塞巴斯托波尔市Gravenstein北路1005号。

O’Reilly books may be purchased for educational, business, or sales promotional use. Online editions are also available for most titles ( http://oreilly.com/safari ). For more information, contact our corporate/institutional sales department: 800-998-9938 or [email protected] .

O’Reilly图书可购买用于教育、商业或促销用途。大多数图书也提供在线版本(http://oreilly.com/safari)。如需更多信息,请联系我们的企业/机构销售部门:800-998-9938或[email protected]。

Editors: Ann Spencer and Marie Beaugureau 编辑:安·斯宾塞和玛丽·博戈罗

Indexer: Ellen Troutman-Zaig 索引编制:艾伦·特劳特曼-扎伊格

Production Editor: Kristen Brown 制作编辑:Kristen Brown

Interior Designer: David Futato 版式设计:David Futato

Copyeditor: Rachel Head 文字编辑:雷切尔·海德

Cover Designer: Karen Montgomery 封面设计:凯伦·蒙哥马利

Proofreader: Amanda Kersey 校对:阿曼达·柯西

Illustrator: Rebecca Demarest 插画:丽贝卡·德马雷斯特

- March 2017: First Edition

Revision History for the First Edition

- 2017-03-01: First Release

See http://oreilly.com/catalog/errata.csp?isbn=9781449373320 for release details.

请参见 http://oreilly.com/catalog/errata.csp?isbn=9781449373320 以获取发布细节。

The O’Reilly logo is a registered trademark of O’Reilly Media, Inc. Designing Data-Intensive Applications, the cover image, and related trade dress are trademarks of O’Reilly Media, Inc.

O’Reilly徽标是O’Reilly Media, Inc.的注册商标。《Designing Data-Intensive Applications》、封面图像及相关商业外观是O’Reilly Media, Inc.的商标。

While the publisher and the author have used good faith efforts to ensure that the information and instructions contained in this work are accurate, the publisher and the author disclaim all responsibility for errors or omissions, including without limitation responsibility for damages resulting from the use of or reliance on this work. Use of the information and instructions contained in this work is at your own risk. If any code samples or other technology this work contains or describes is subject to open source licenses or the intellectual property rights of others, it is your responsibility to ensure that your use thereof complies with such licenses and/or rights.

尽管出版商和作者已经诚信努力确保本作品中包含的信息和指令准确无误,但出版商和作者不对错误或遗漏负责,包括但不限于因使用或依赖于本作品所导致的损害。使用本作品中包含的信息和指令需自行承担风险。如果本作品中包含或描述的任何代码示例或其他技术受开放源代码许可证或他人的知识产权的限制,则您有责任确保遵守此类许可证和/或权利。

978-1-449-37332-0


[LSI]


Dedication

Technology is a powerful force in our society. Data, software, and communication can be used for bad: to entrench unfair power structures, to undermine human rights, and to protect vested interests. But they can also be used for good: to make underrepresented people’s voices heard, to create opportunities for everyone, and to avert disasters. This book is dedicated to everyone working toward the good.

技术是我们社会中一股强大的力量。数据、软件和通信可以被用来作恶:巩固不公平的权力结构、损害人权以及保护既得利益。但它们也可以被用来行善:让弱势群体的声音被听见,为所有人创造机会,以及避免灾难。谨以此书献给所有致力于向善的人。

Computing is pop culture. […] Pop culture holds a disdain for history. Pop culture is all about identity and feeling like you’re participating. It has nothing to do with cooperation, the past or the future—it’s living in the present. I think the same is true of most people who write code for money. They have no idea where [their culture came from].

计算机是流行文化。流行文化对历史不屑一顾。流行文化关注身份认同和参与感,与合作、过去或未来毫不相关——它只存在于当下。我认为,大多数为赚钱而编写代码的人也是如此,他们不知道自己所处的文化从何而来。

Alan Kay, in interview with Dr Dobb’s Journal (2012)

艾伦·凯(Alan Kay),《Dr Dobb’s Journal》访谈(2012)

Preface

If you have worked in software engineering in recent years, especially in server-side and backend systems, you have probably been bombarded with a plethora of buzzwords relating to storage and processing of data. NoSQL! Big Data! Web-scale! Sharding! Eventual consistency! ACID! CAP theorem! Cloud services! MapReduce! Real-time!

如果您近年来从事软件工程工作,尤其是服务器端和后端系统方面的工作,您很可能已经被大量与数据存储和处理相关的流行词轮番轰炸过。NoSQL!大数据!Web规模!分片!最终一致性!ACID!CAP定理!云服务!MapReduce!实时!

In the last decade we have seen many interesting developments in databases, in distributed systems, and in the ways we build applications on top of them. There are various driving forces for these developments:

在过去的十年中,我们看到数据库、分布式系统以及在其之上构建应用程序的方式都出现了许多有趣的发展。这些发展背后有多种驱动力:

- Internet companies such as Google, Yahoo!, Amazon, Facebook, LinkedIn, Microsoft, and Twitter are handling huge volumes of data and traffic, forcing them to create new tools that enable them to efficiently handle such scale.

像谷歌、雅虎、亚马逊、Facebook、领英、微软和Twitter这样的互联网公司正在处理大量的数据和流量,这迫使它们创建新的工具,使它们能够高效地处理这样的规模。

- Businesses need to be agile, test hypotheses cheaply, and respond quickly to new market insights by keeping development cycles short and data models flexible.

企业需要保持敏捷,以低成本检验假设,并通过缩短开发周期、保持数据模型的灵活性来快速响应新的市场洞察。

- Free and open source software has become very successful and is now preferred to commercial or bespoke in-house software in many environments.

自由和开源软件已经非常成功,在许多环境中比商业软件或内部定制软件更受青睐。

- CPU clock speeds are barely increasing, but multi-core processors are standard, and networks are getting faster. This means parallelism is only going to increase.

CPU时钟速度几乎没有增加,但多核处理器已成为标准,网络速度也越来越快。这意味着并行性只会增加。

- Even if you work on a small team, you can now build systems that are distributed across many machines and even multiple geographic regions, thanks to infrastructure as a service (IaaS) such as Amazon Web Services.

即使您在小团队工作,也可以借助基础设施即服务(IaaS)(如Amazon Web Services)构建跨多台机器甚至多个地理区域的系统。

- Many services are now expected to be highly available; extended downtime due to outages or maintenance is becoming increasingly unacceptable.

许多服务现在都被期望具有高可用性;因故障或维护而导致的长时间停机越来越不可接受。

Data-intensive applications are pushing the boundaries of what is possible by making use of these technological developments. We call an application data-intensive if data is its primary challenge—the quantity of data, the complexity of data, or the speed at which it is changing—as opposed to compute-intensive , where CPU cycles are the bottleneck.

数据密集型应用程序正在利用这些技术发展,不断拓展可能性的边界。如果数据是一个应用的主要挑战(数据量、数据的复杂性或数据的变化速度),我们就称其为数据密集型应用;与之相对的是计算密集型应用,其瓶颈在于CPU周期。

The tools and technologies that help data-intensive applications store and process data have been rapidly adapting to these changes. New types of database systems (“NoSQL”) have been getting lots of attention, but message queues, caches, search indexes, frameworks for batch and stream processing, and related technologies are very important too. Many applications use some combination of these.

帮助数据密集型应用程序存储和处理数据的工具和技术已经迅速适应这些变化。新型的数据库系统("NoSQL")受到了很多关注,但是消息队列、缓存、搜索索引、批处理和流处理的框架以及相关技术也非常重要。许多应用程序使用这些技术的组合。

The buzzwords that fill this space are a sign of enthusiasm for the new possibilities, which is a great thing. However, as software engineers and architects, we also need to have a technically accurate and precise understanding of the various technologies and their trade-offs if we want to build good applications. For that understanding, we have to dig deeper than buzzwords.

这个空间充满了时髦词汇,显示了对新可能性的热情,这是一件好事。然而,作为软件工程师和架构师,如果我们想要构建出优秀的应用程序,我们还需要对各种技术及其取舍有准确而精确的理解。为了获得这种理解,我们必须比流行语更深入地挖掘。

Fortunately, behind the rapid changes in technology, there are enduring principles that remain true, no matter which version of a particular tool you are using. If you understand those principles, you’re in a position to see where each tool fits in, how to make good use of it, and how to avoid its pitfalls. That’s where this book comes in.

幸运的是,在技术的迅速变化背后,存在一些不变的原则,无论你使用哪个版本的特定工具都是如此。如果你理解这些原则,就能够看到每个工具的适用范围,如何有效地利用它以及如何避免它的陷阱。这就是本书的作用。

The goal of this book is to help you navigate the diverse and fast-changing landscape of technologies for processing and storing data. This book is not a tutorial for one particular tool, nor is it a textbook full of dry theory. Instead, we will look at examples of successful data systems: technologies that form the foundation of many popular applications and that have to meet scalability, performance, and reliability requirements in production every day.

本书的目标是帮助您在多样且快速变化的数据处理和存储技术领域中找到方向。本书不是针对某个特定工具的教程,也不是一本充满枯燥理论的教科书。相反,我们将考察一些成功的数据系统实例:这些技术是许多流行应用程序的基础,每天都必须在生产环境中满足可扩展性、性能和可靠性方面的要求。

We will dig into the internals of those systems, tease apart their key algorithms, discuss their principles and the trade-offs they have to make. On this journey, we will try to find useful ways of thinking about data systems—not just how they work, but also why they work that way, and what questions we need to ask.

我们将深入研究这些系统的内部结构,分离出它们的关键算法,讨论它们的原理和需要做出的权衡。在这个过程中,我们将尝试找到有用的关于数据系统的思考方式——不仅要了解它们如何工作,还要了解为什么以及我们需要问什么问题。

After reading this book, you will be in a great position to decide which kind of technology is appropriate for which purpose, and understand how tools can be combined to form the foundation of a good application architecture. You won’t be ready to build your own database storage engine from scratch, but fortunately that is rarely necessary. You will, however, develop a good intuition for what your systems are doing under the hood so that you can reason about their behavior, make good design decisions, and track down any problems that may arise.

阅读完本书后,您将能够准确判断哪种技术适用于哪种目的,并了解如何将不同工具结合起来构建一个良好的应用架构基础。虽然您可能没有能力从零开始构建自己的数据库存储引擎,但幸运的是,这很少有必要。但是,您将能够对系统运行情况有良好的直觉,从而能够推理其行为,做出良好的设计决策,并找出可能出现的任何问题。

Who Should Read This Book?

If you develop applications that have some kind of server/backend for storing or processing data, and your applications use the internet (e.g., web applications, mobile apps, or internet-connected sensors), then this book is for you.

如果您开发的应用程序有一些服务器/后端来存储或处理数据,并且您的应用程序使用互联网(例如网络应用程序、移动应用程序或连接到互联网的传感器),那么这本书适合您。

This book is for software engineers, software architects, and technical managers who love to code. It is especially relevant if you need to make decisions about the architecture of the systems you work on—for example, if you need to choose tools for solving a given problem and figure out how best to apply them. But even if you have no choice over your tools, this book will help you better understand their strengths and weaknesses.

本书面向热爱编程的软件工程师、软件架构师和技术经理。如果您需要对所负责系统的架构做出决策,例如为某个特定问题选择工具并弄清如何最好地应用它们,本书尤其适合您。但即使您无法自行选择工具,本书也可以帮助您更好地了解它们的优势和劣势。

You should have some experience building web-based applications or network services, and you should be familiar with relational databases and SQL. Any non-relational databases and other data-related tools you know are a bonus, but not required. A general understanding of common network protocols like TCP and HTTP is helpful. Your choice of programming language or framework makes no difference for this book.

您应该有一些构建基于Web的应用程序或网络服务的经验,并且应该熟悉关系型数据库和SQL。您所了解的任何非关系型数据库和其他数据相关工具都是加分项,但不是必需的。对TCP和HTTP等常见网络协议有大致了解会有所帮助。您选择哪种编程语言或框架,对阅读本书没有影响。

If any of the following are true for you, you’ll find this book valuable:

如果以下情况适用于您,您会发现这本书非常有价值:

- You want to learn how to make data systems scalable, for example, to support web or mobile apps with millions of users.

你想学习如何使数据系统可扩展,例如,支持拥有数百万用户的网站或移动应用程序。

- You need to make applications highly available (minimizing downtime) and operationally robust.

您需要使应用程序高度可用(最小化停机时间),并且在运维上稳健。

- You are looking for ways of making systems easier to maintain in the long run, even as they grow and as requirements and technologies change.

您正在寻找方法,使系统即使在不断增长、需求和技术不断变化的情况下,从长远来看也更易于维护。

- You have a natural curiosity for the way things work and want to know what goes on inside major websites and online services. This book breaks down the internals of various databases and data processing systems, and it’s great fun to explore the bright thinking that went into their design.

你对事物的运作方式有天生的好奇心,想要了解主要网站和在线服务内部的工作原理。这本书剖析了各种数据库和数据处理系统的内部结构,探索其设计背后的精妙思考过程,非常有趣。

Sometimes, when discussing scalable data systems, people make comments along the lines of, “You’re not Google or Amazon. Stop worrying about scale and just use a relational database.” There is truth in that statement: building for scale that you don’t need is wasted effort and may lock you into an inflexible design. In effect, it is a form of premature optimization. However, it’s also important to choose the right tool for the job, and different technologies each have their own strengths and weaknesses. As we shall see, relational databases are important but not the final word on dealing with data.

有时候,当讨论可扩展数据系统时,人们会发表类似这样的评论:"你不是谷歌或亚马逊。别再担心规模了,用关系数据库就行。"这种说法有一定道理:为并不需要的规模而构建系统是白费力气,还可能把您锁定在不灵活的设计中。实际上,这是一种过早优化。然而,为工作选择合适的工具同样重要,不同的技术各有其优点和缺点。正如我们将看到的,关系数据库很重要,但并不是处理数据的终极答案。

Scope of This Book

This book does not attempt to give detailed instructions on how to install or use specific software packages or APIs, since there is already plenty of documentation for those things. Instead we discuss the various principles and trade-offs that are fundamental to data systems, and we explore the different design decisions taken by different products.

本书无意提供如何安装或使用特定软件包或API的详细说明,因为这些内容已经有了大量的文档。相反,我们讨论了数据系统中基本的原则和权衡,探讨了不同产品所采取的不同设计决策。

In the ebook editions we have included links to the full text of online resources. All links were verified at the time of publication, but unfortunately links tend to break frequently due to the nature of the web. If you come across a broken link, or if you are reading a print copy of this book, you can look up references using a search engine. For academic papers, you can search for the title in Google Scholar to find open-access PDF files. Alternatively, you can find all of the references at https://github.com/ept/ddia-references , where we maintain up-to-date links.

在电子书版本中,我们已经包含了指向在线资源全文的链接。所有链接在出版时都经过验证,但不幸的是,由于网络的本质,链接容易中断。如果您遇到破损的链接,或者正在阅读本书的印刷版,则可以使用搜索引擎查找参考资料。对于学术论文,您可以在Google学术中搜索标题以找到开放获取的PDF文件。或者,您可以在https://github.com/ept/ddia-references找到所有参考资料的最新链接。

We look primarily at the architecture of data systems and the ways they are integrated into data-intensive applications. This book doesn’t have space to cover deployment, operations, security, management, and other areas—those are complex and important topics, and we wouldn’t do them justice by making them superficial side notes in this book. They deserve books of their own.

我们主要关注数据系统的架构,以及它们集成到数据密集型应用程序中的方式。本书没有篇幅涵盖部署、运维、安全、管理等其他领域。这些都是复杂且重要的主题,如果只把它们当作本书中浮光掠影的旁注,是对它们的不公。它们值得各自成书。

Many of the technologies described in this book fall within the realm of the Big Data buzzword. However, the term “Big Data” is so overused and underdefined that it is not useful in a serious engineering discussion. This book uses less ambiguous terms, such as single-node versus distributed systems, or online/interactive versus offline/batch processing systems.

本书描述的许多技术都属于“大数据”这个流行词的范畴。然而,“大数据”一词被过度使用且缺乏明确定义,在严肃的工程讨论中并无用处。本书使用含义更明确的术语,例如单节点系统与分布式系统,或在线/交互式处理系统与离线/批处理系统。

This book has a bias toward free and open source software (FOSS), because reading, modifying, and executing source code is a great way to understand how something works in detail. Open platforms also reduce the risk of vendor lock-in. However, where appropriate, we also discuss proprietary software (closed-source software, software as a service, or companies’ in-house software that is only described in literature but not released publicly).

这本书有偏向于自由和开源软件(FOSS),因为阅读、修改和执行源代码是了解事物详细工作原理的好方法。开放的平台也可以降低厂商锁定的风险。然而,在适当的情况下,我们也会讨论专有软件(闭源软件、软件即服务或公司内部的软件只在文献中描述但未公开发布)。

Outline of This Book

This book is arranged into three parts:

这本书分为三个部分:

-

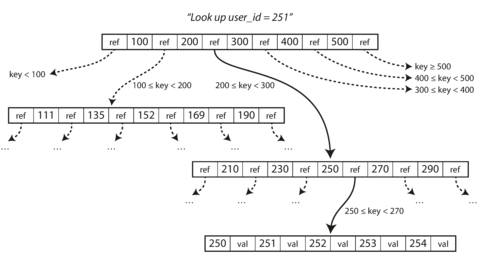

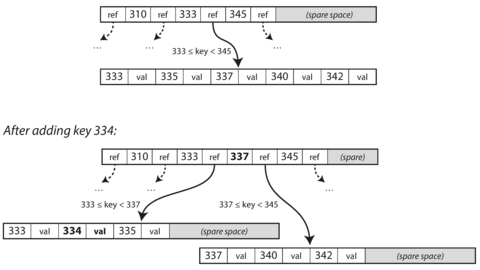

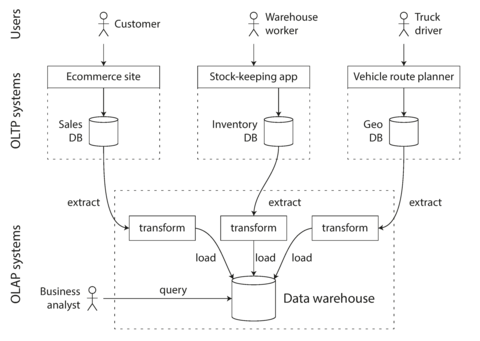

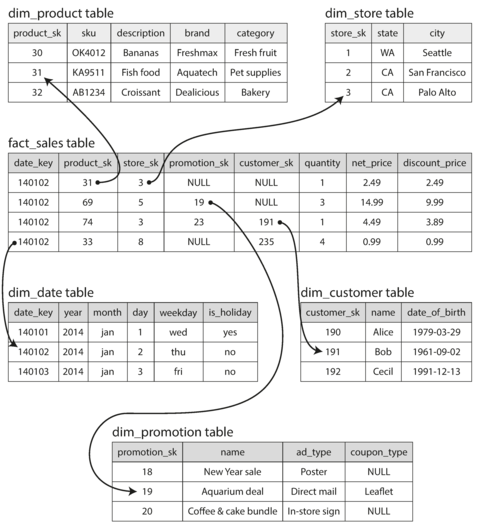

In Part I , we discuss the fundamental ideas that underpin the design of data-intensive applications. We start in Chapter 1 by discussing what we’re actually trying to achieve: reliability, scalability, and maintainability; how we need to think about them; and how we can achieve them. In Chapter 2 we compare several different data models and query languages, and see how they are appropriate to different situations. In Chapter 3 we talk about storage engines: how databases arrange data on disk so that we can find it again efficiently. Chapter 4 turns to formats for data encoding (serialization) and evolution of schemas over time.

在第一部分中,我们讨论了设计数据密集型应用程序所依托的基本思想。我们从第1章开始讨论我们实际要实现的目标:可靠性、可扩展性和可维护性;我们需要如何思考它们以及如何实现它们。在第2章中,我们比较了几种不同的数据模型和查询语言,并看到它们适用于不同的情况。在第3章中,我们讨论了存储引擎:数据库如何在磁盘上安排数据以便我们可以高效地找到它。第4章则转向数据编码格式(序列化)和模式随时间演变的格式。

-

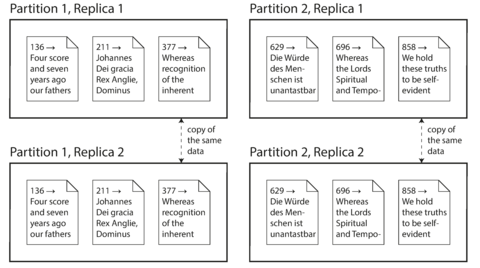

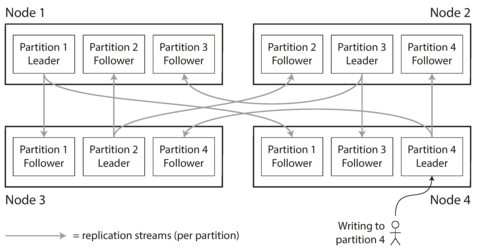

In Part II , we move from data stored on one machine to data that is distributed across multiple machines. This is often necessary for scalability, but brings with it a variety of unique challenges. We first discuss replication ( Chapter 5 ), partitioning/sharding ( Chapter 6 ), and transactions ( Chapter 7 ). We then go into more detail on the problems with distributed systems ( Chapter 8 ) and what it means to achieve consistency and consensus in a distributed system ( Chapter 9 ).

在第二部分,我们从存储于单独设备的数据转移到分布在多台设备上的数据。这通常是为了可伸缩性,但也带来了许多独特挑战。我们首先讨论复制(第五章),分区/分片(第六章)和事务(第七章)。然后,我们更详细地讨论分布式系统的问题(第八章),以及在分布式系统中实现一致性和共识的意义(第九章)。

- In Part III, we discuss systems that derive some datasets from other datasets. Derived data often occurs in heterogeneous systems: when there is no one database that can do everything well, applications need to integrate several different databases, caches, indexes, and so on. In Chapter 10 we start with a batch processing approach to derived data, and we build upon it with stream processing in Chapter 11. Finally, in Chapter 12 we put everything together and discuss approaches for building reliable, scalable, and maintainable applications in the future.

在第三部分中,我们讨论从其他数据集派生出某些数据集的系统。派生数据通常出现在异构系统中:当没有一个数据库能把所有事情都做好时,应用程序需要集成多个不同的数据库、缓存、索引等等。在第10章中,我们从处理派生数据的批处理方法讲起,并在第11章中以流处理在其基础上加以扩展。最后,在第12章中,我们将所有内容整合起来,讨论未来构建可靠、可扩展和可维护应用程序的方法。

References and Further Reading

Most of what we discuss in this book has already been said elsewhere in some form or another—in conference presentations, research papers, blog posts, code, bug trackers, mailing lists, and engineering folklore. This book summarizes the most important ideas from many different sources, and it includes pointers to the original literature throughout the text. The references at the end of each chapter are a great resource if you want to explore an area in more depth, and most of them are freely available online.

本书讨论的大部分内容,都已经以这样或那样的形式在别处被讲过:会议演讲、研究论文、博客文章、代码、缺陷跟踪系统、邮件列表以及工程界的口口相传。本书总结了众多不同来源中最重要的思想,并在全书各处给出了指向原始文献的引用。如果您想更深入地探索某个领域,每章末尾的参考文献是极好的资源,其中大多数都可以在网上免费获取。

O’Reilly Safari

Note

Safari (formerly Safari Books Online) is a membership-based training and reference platform for enterprise, government, educators, and individuals.

Safari(原名Safari Books Online)是一个面向企业、政府、教育机构和个人的基于会员制的培训和参考平台。

Members have access to thousands of books, training videos, Learning Paths, interactive tutorials, and curated playlists from over 250 publishers, including O’Reilly Media, Harvard Business Review, Prentice Hall Professional, Addison-Wesley Professional, Microsoft Press, Sams, Que, Peachpit Press, Adobe, Focal Press, Cisco Press, John Wiley & Sons, Syngress, Morgan Kaufmann, IBM Redbooks, Packt, Adobe Press, FT Press, Apress, Manning, New Riders, McGraw-Hill, Jones & Bartlett, and Course Technology, among others.

会员可访问来自250多个出版商的数千本图书、培训视频、学习路径、交互式教程和策划播放列表,包括O'Reilly Media、哈佛商业评论、Prentice Hall Professional、Addison-Wesley Professional、Microsoft Press、Sams、Que、Peachpit Press、Adobe、Focal Press、Cisco Press、John Wiley & Sons、Syngress、Morgan Kaufmann、IBM Redbooks、Packt、Adobe Press、FT Press、Apress、Manning、New Riders、McGraw-Hill、Jones&Bartlett以及Course Technology等。

For more information, please visit http://oreilly.com/safari .

请访问http://oreilly.com/safari获取更多信息。

How to Contact Us

Please address comments and questions concerning this book to the publisher:

请将有关此书的评论和问题发送给出版社:

- O’Reilly Media, Inc.

- 1005 Gravenstein Highway North

- Sebastopol, CA 95472

- 800-998-9938 (in the United States or Canada)

- 707-829-0515 (international or local)

- 707-829-0104 (fax)

We have a web page for this book, where we list errata, examples, and any additional information. You can access this page at http://bit.ly/designing-data-intensive-apps .

我们为本书设有一个网页,其中列出了勘误、示例和其他补充信息。您可以通过 http://bit.ly/designing-data-intensive-apps 访问该页面。

To comment or ask technical questions about this book, send email to [email protected] .

如需对本书进行评论或提出技术问题,请发送电子邮件至[email protected]。

For more information about our books, courses, conferences, and news, see our website at http://www.oreilly.com .

有关我们的图书、课程、会议和新闻的更多信息,请访问我们的网站http://www.oreilly.com。

Find us on Facebook: http://facebook.com/oreilly

在Facebook上关注我们:http://facebook.com/oreilly

Follow us on Twitter: http://twitter.com/oreillymedia

关注我们的Twitter账户:http://twitter.com/oreillymedia。

Watch us on YouTube: http://www.youtube.com/oreillymedia

在YouTube上观看我们:http://www.youtube.com/oreillymedia。

Acknowledgments

This book is an amalgamation and systematization of a large number of other people’s ideas and knowledge, combining experience from both academic research and industrial practice. In computing we tend to be attracted to things that are new and shiny, but I think we have a huge amount to learn from things that have been done before. This book has over 800 references to articles, blog posts, talks, documentation, and more, and they have been an invaluable learning resource for me. I am very grateful to the authors of this material for sharing their knowledge.

这本书是对大量其他人的思想和知识进行的汇编和系统化,结合了学术研究和工业实践的经验。在计算领域,我们往往被新奇的东西所吸引,但我认为我们有很多东西可以从以前的事情中学习。这本书引用了800多篇文章、博客文章、讲话和文档等,它们对我来说是一种宝贵的学习资源。我非常感谢这些材料的作者分享他们的知识。

I have also learned a lot from personal conversations, thanks to a large number of people who have taken the time to discuss ideas or patiently explain things to me. In particular, I would like to thank Joe Adler, Ross Anderson, Peter Bailis, Márton Balassi, Alastair Beresford, Mark Callaghan, Mat Clayton, Patrick Collison, Sean Cribbs, Shirshanka Das, Niklas Ekström, Stephan Ewen, Alan Fekete, Gyula Fóra, Camille Fournier, Andres Freund, John Garbutt, Seth Gilbert, Tom Haggett, Pat Helland, Joe Hellerstein, Jakob Homan, Heidi Howard, John Hugg, Julian Hyde, Conrad Irwin, Evan Jones, Flavio Junqueira, Jessica Kerr, Kyle Kingsbury, Jay Kreps, Carl Lerche, Nicolas Liochon, Steve Loughran, Lee Mallabone, Nathan Marz, Caitie McCaffrey, Josie McLellan, Christopher Meiklejohn, Ian Meyers, Neha Narkhede, Neha Narula, Cathy O’Neil, Onora O’Neill, Ludovic Orban, Zoran Perkov, Julia Powles, Chris Riccomini, Henry Robinson, David Rosenthal, Jennifer Rullmann, Matthew Sackman, Martin Scholl, Amit Sela, Gwen Shapira, Greg Spurrier, Sam Stokes, Ben Stopford, Tom Stuart, Diana Vasile, Rahul Vohra, Pete Warden, and Brett Wooldridge.

因为许多人抽出时间与我探讨问题或耐心向我解释事物,我也从个人交流中学到了很多。特别感谢以下人士: Joe Adler, Ross Anderson, Peter Bailis, Márton Balassi, Alastair Beresford, Mark Callaghan, Mat Clayton, Patrick Collison, Sean Cribbs, Shirshanka Das, Niklas Ekström, Stephan Ewen, Alan Fekete, Gyula Fóra, Camille Fournier, Andres Freund, John Garbutt, Seth Gilbert, Tom Haggett, Pat Helland, Joe Hellerstein, Jakob Homan, Heidi Howard, John Hugg, Julian Hyde, Conrad Irwin, Evan Jones, Flavio Junqueira, Jessica Kerr, Kyle Kingsbury, Jay Kreps, Carl Lerche, Nicolas Liochon, Steve Loughran, Lee Mallabone, Nathan Marz, Caitie McCaffrey, Josie McLellan, Christopher Meiklejohn, Ian Meyers, Neha Narkhede, Neha Narula, Cathy O’Neil, Onora O’Neill, Ludovic Orban, Zoran Perkov, Julia Powles, Chris Riccomini, Henry Robinson, David Rosenthal, Jennifer Rullmann, Matthew Sackman, Martin Scholl, Amit Sela, Gwen Shapira, Greg Spurrier, Sam Stokes, Ben Stopford, Tom Stuart, Diana Vasile, Rahul Vohra, Pete Warden, 和 Brett Wooldridge.

Several more people have been invaluable to the writing of this book by reviewing drafts and providing feedback. For these contributions I am particularly indebted to Raul Agepati, Tyler Akidau, Mattias Andersson, Sasha Baranov, Veena Basavaraj, David Beyer, Jim Brikman, Paul Carey, Raul Castro Fernandez, Joseph Chow, Derek Elkins, Sam Elliott, Alexander Gallego, Mark Grover, Stu Halloway, Heidi Howard, Nicola Kleppmann, Stefan Kruppa, Bjorn Madsen, Sander Mak, Stefan Podkowinski, Phil Potter, Hamid Ramazani, Sam Stokes, and Ben Summers. Of course, I take all responsibility for any remaining errors or unpalatable opinions in this book.

这本书的写作离不开以下这些人的宝贵意见和反馈。我深表感激:Raul Agepati、Tyler Akidau、Mattias Andersson、Sasha Baranov、Veena Basavaraj、David Beyer、Jim Brikman、Paul Carey、Raul Castro Fernandez、Joseph Chow、Derek Elkins、Sam Elliott、Alexander Gallego、Mark Grover、Stu Halloway、Heidi Howard、Nicola Kleppmann、Stefan Kruppa、Bjorn Madsen、Sander Mak、Stefan Podkowinski、Phil Potter、Hamid Ramazani、Sam Stokes 和 Ben Summers。当然,本书中任何剩余的错误或不受欢迎的观点都由作者自负。

For helping this book become real, and for their patience with my slow writing and unusual requests, I am grateful to my editors Marie Beaugureau, Mike Loukides, Ann Spencer, and all the team at O’Reilly. For helping find the right words, I thank Rachel Head. For giving me the time and freedom to write in spite of other work commitments, I thank Alastair Beresford, Susan Goodhue, Neha Narkhede, and Kevin Scott.

感谢我的编辑玛丽·博戈罗、迈克·卢基德斯、安·斯宾塞以及O’Reilly的整个团队,他们帮助这本书成为现实,并耐心包容我缓慢的写作进度和不寻常的请求。感谢Rachel Head帮助我找到合适的措辞。感谢Alastair Beresford、Susan Goodhue、Neha Narkhede和Kevin Scott,他们让我在有其他工作任务的情况下仍有时间和自由来写作。

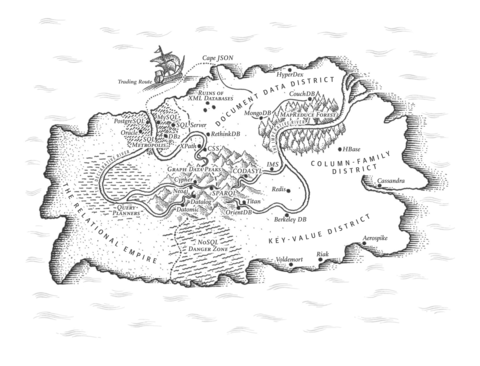

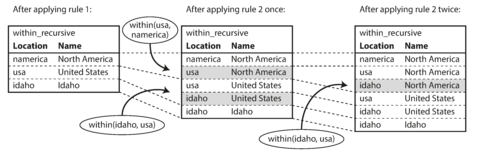

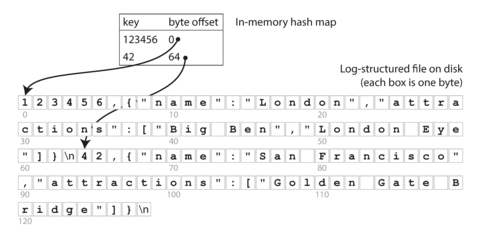

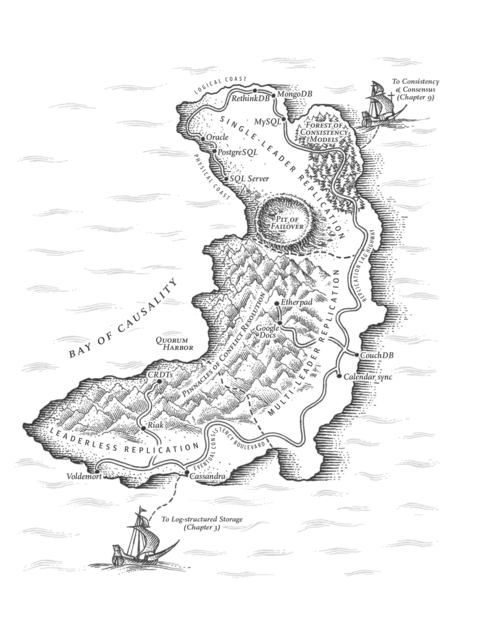

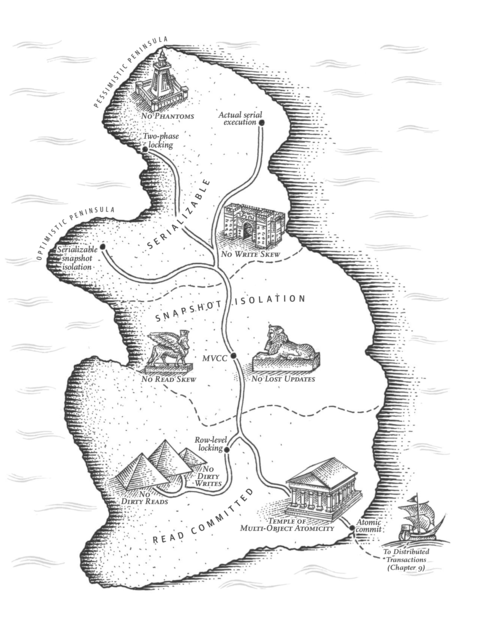

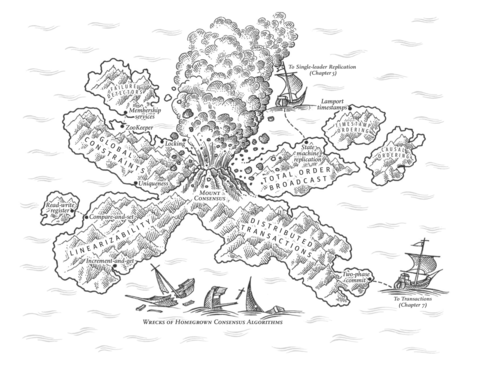

Very special thanks are due to Shabbir Diwan and Edie Freedman, who illustrated with great care the maps that accompany the chapters. It’s wonderful that they took on the unconventional idea of creating maps, and made them so beautiful and compelling.

特别感谢沙比尔·迪万(Shabbir Diwan)和伊迪·弗里德曼(Edie Freedman),他们非常用心地绘制了各章所附的地图。他们接受了绘制地图这个不同寻常的想法,并把地图做得如此精美、引人入胜,实在太棒了。

Finally, my love goes to my family and friends, without whom I would not have been able to get through this writing process that has taken almost four years. You’re the best.

最后,我要把爱献给我的家人和朋友们,没有你们,我无法走完这段历时近四年的写作过程。你们是最棒的。

Part I. Foundations of Data Systems

The first four chapters go through the fundamental ideas that apply to all data systems, whether running on a single machine or distributed across a cluster of machines:

前四章介绍了适用于所有数据系统的基本思想,无论是在单台计算机上运行还是分布在一组计算机上。

- Chapter 1 introduces the terminology and approach that we’re going to use throughout this book. It examines what we actually mean by words like reliability, scalability, and maintainability, and how we can try to achieve these goals.

第1章介绍了我们在本书中将要使用的术语和方法。它探讨了可靠性、可扩展性和可维护性这些词的确切含义,以及我们如何努力实现这些目标。

- Chapter 2 compares several different data models and query languages, the most visible distinguishing factor between databases from a developer’s point of view. We will see how different models are appropriate to different situations.

第二章比较了几种不同的数据模型和查询语言——这是从开发人员的角度来看,数据库之间最显著的区别因素。我们将看到不同的模型适用于不同的情况。

- Chapter 3 turns to the internals of storage engines and looks at how databases lay out data on disk. Different storage engines are optimized for different workloads, and choosing the right one can have a huge effect on performance.

第3章介绍了存储引擎的内部机制,并探讨了数据库在磁盘上如何布置数据。不同的存储引擎针对不同的工作负载进行了优化,选择正确的引擎可以对性能产生巨大影响。

- Chapter 4 compares various formats for data encoding (serialization) and especially examines how they fare in an environment where application requirements change and schemas need to adapt over time.

第4章比较了不同的数据编码格式(序列化),特别是在应用需求变化和模式需要随时间调整的环境中它们的表现。

Later, Part II will turn to the particular issues of distributed data systems.

接下来,第二部分将转向分布式数据系统的特定问题。

Chapter 1. Reliable, Scalable, and Maintainable Applications

The Internet was done so well that most people think of it as a natural resource like the Pacific Ocean, rather than something that was man-made. When was the last time a technology with a scale like that was so error-free?

互联网做得如此出色,以至于大多数人把它看作像太平洋一样的自然资源,而不是人造的东西。上一次有如此规模的技术能做到这样少出错,是什么时候的事了?

Alan Kay, in interview with Dr Dobb’s Journal (2012)

艾伦·凯(Alan Kay),《Dr Dobb’s Journal》访谈(2012)

Many applications today are data-intensive, as opposed to compute-intensive. Raw CPU power is rarely a limiting factor for these applications; bigger problems are usually the amount of data, the complexity of data, and the speed at which it is changing.

如今许多应用程序是数据密集型的,而非计算密集型的。对于这些应用程序,CPU的原始算力很少成为限制因素,更大的问题通常是数据量、数据的复杂性以及数据的变化速度。

A data-intensive application is typically built from standard building blocks that provide commonly needed functionality. For example, many applications need to:

一个数据密集型应用通常是由提供常见功能的标准构建块构建而成。例如,许多应用程序需要:

- Store data so that they, or another application, can find it again later (databases)

将数据存储起来,以便它们或其他应用程序可以在以后找到它(数据库)

- Remember the result of an expensive operation, to speed up reads (caches)

记住昂贵操作的结果以加快读取速度(缓存)。

- Allow users to search data by keyword or filter it in various ways (search indexes)

允许用户按关键字搜索数据,或以各种方式过滤数据(搜索索引)

- Send a message to another process, to be handled asynchronously (stream processing)

发送消息到另一个进程,进行异步处理(流处理)。

- Periodically crunch a large amount of accumulated data (batch processing)

定期处理大量累积的数据(批处理)

If that sounds painfully obvious, that’s just because these data systems are such a successful abstraction: we use them all the time without thinking too much. When building an application, most engineers wouldn’t dream of writing a new data storage engine from scratch, because databases are a perfectly good tool for the job.

如果这听起来显而易见,那只是因为这些数据系统是非常成功的抽象:我们一直在使用它们,而不用想太多。在构建应用程序时,大多数工程师做梦也不会想到从头编写一个新的数据存储引擎,因为数据库已经是完全胜任这项工作的工具。

But reality is not that simple. There are many database systems with different characteristics, because different applications have different requirements. There are various approaches to caching, several ways of building search indexes, and so on. When building an application, we still need to figure out which tools and which approaches are the most appropriate for the task at hand. And it can be hard to combine tools when you need to do something that a single tool cannot do alone.

然而现实并不是那么简单的。有很多具有不同特征的数据库系统,因为不同的应用有不同的要求。有各种各样的缓存方法,几种建立搜索索引的方法等等。在构建应用程序时,我们仍然需要弄清楚哪些工具和方法最适合手头的任务。当您需要做一些单个工具无法完成的任务时,很难组合工具。

This book is a journey through both the principles and the practicalities of data systems, and how you can use them to build data-intensive applications. We will explore what different tools have in common, what distinguishes them, and how they achieve their characteristics.

这本书带你穿越数据系统的原则和实践,以及如何使用它们构建数据密集型应用。我们将探讨不同工具的共同点、区别以及它们如何实现其特性。

In this chapter, we will start by exploring the fundamentals of what we are trying to achieve: reliable, scalable, and maintainable data systems. We’ll clarify what those things mean, outline some ways of thinking about them, and go over the basics that we will need for later chapters. In the following chapters we will continue layer by layer, looking at different design decisions that need to be considered when working on a data-intensive application.

在本章中,我们将首先探讨我们所致力于实现的基本原理:可靠、可扩展和可维护的数据系统。我们将澄清这些事物的含义,概述一些思考方式,并介绍我们后续章节所需要的基础知识。在接下来的章节中,我们将逐层深入,探讨处理数据密集型应用时需要考虑的不同设计决策。

Thinking About Data Systems

We typically think of databases, queues, caches, etc. as being very different categories of tools. Although a database and a message queue have some superficial similarity—both store data for some time—they have very different access patterns, which means different performance characteristics, and thus very different implementations.

我们通常认为数据库、队列、缓存等是非常不同的工具类别。 尽管数据库和消息队列有一些表面的相似之处——都可以存储数据一段时间——但它们具有非常不同的访问模式,这意味着不同的性能特征,因此实现也非常不同。

So why should we lump them all together under an umbrella term like data systems?

那么我们为什么要把它们都归为一个数据系统的总称呢?

Many new tools for data storage and processing have emerged in recent years. They are optimized for a variety of different use cases, and they no longer neatly fit into traditional categories [ 1 ]. For example, there are datastores that are also used as message queues (Redis), and there are message queues with database-like durability guarantees (Apache Kafka). The boundaries between the categories are becoming blurred.

近年来涌现了许多新的数据存储和处理工具。它们针对不同的使用情境进行了优化,并不再简单地符合传统分类[1]。例如,有些数据存储库也用作消息队列(Redis),有些消息队列具备类似数据库的耐久性保证(Apache Kafka)。各种类别的边界变得模糊不清。

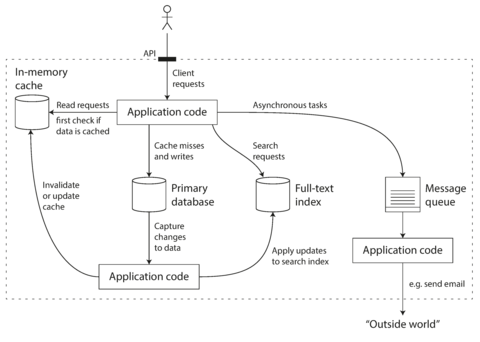

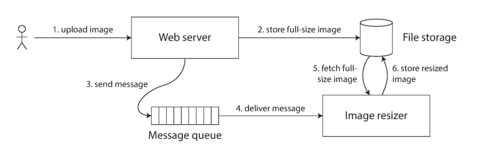

Secondly, increasingly many applications now have such demanding or wide-ranging requirements that a single tool can no longer meet all of its data processing and storage needs. Instead, the work is broken down into tasks that can be performed efficiently on a single tool, and those different tools are stitched together using application code.

其次,越来越多的应用程序现在拥有如此苛刻或广泛的要求,以至于单个工具不能再满足其所有数据处理和存储需求。相反,工作被分解为可以在单个工具上高效执行的任务,并使用应用代码将这些不同的工具组合在一起。

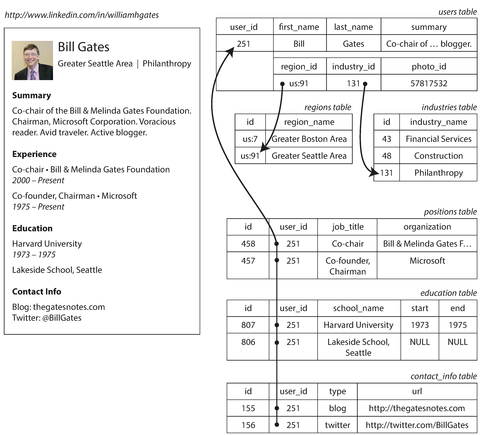

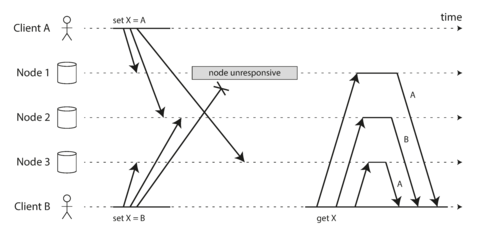

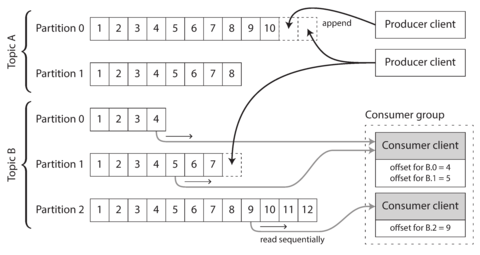

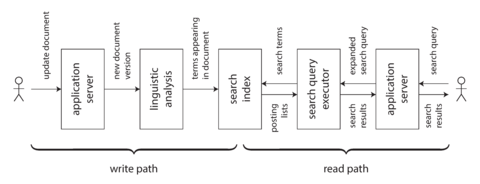

For example, if you have an application-managed caching layer (using Memcached or similar), or a full-text search server (such as Elasticsearch or Solr) separate from your main database, it is normally the application code’s responsibility to keep those caches and indexes in sync with the main database. Figure 1-1 gives a glimpse of what this may look like (we will go into detail in later chapters).

例如,如果您拥有一个应用程序管理的缓存层(使用Memcached或类似工具)或一个与您的主数据库分开的全文搜索服务器(例如Elasticsearch或Solr),通常应用程序代码需要负责保持这些缓存和索引与主数据库同步。图1-1展示了这可能是什么样子(我们会在后面的章节详细说明)。

Figure 1-1. One possible architecture for a data system that combines several components.

When you combine several tools in order to provide a service, the service’s interface or application programming interface (API) usually hides those implementation details from clients. Now you have essentially created a new, special-purpose data system from smaller, general-purpose components. Your composite data system may provide certain guarantees: e.g., that the cache will be correctly invalidated or updated on writes so that outside clients see consistent results. You are now not only an application developer, but also a data system designer.

当你将几个工具组合在一起以提供服务时,服务的界面或应用程序接口(API)通常会从客户端隐藏这些实现细节。现在,您基本上从较小的通用组件创建了一个新的特定用途的数据系统。您的组合数据系统可能提供某些保证:例如,缓存将在写操作时正确失效或更新,以便外部客户端看到一致的结果。现在,您不仅是应用程序开发人员,还是数据系统设计师。
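To make the idea of a composite data system concrete, here is a minimal sketch of application code that keeps a cache consistent with a main database by invalidating on write. The class name `UserService` and the two dictionaries standing in for the database and for Memcached are assumptions made for illustration; a real deployment would also have to deal with concurrent writers and partial failures, which this sketch ignores.

```python
class UserService:
    """Hypothetical composite data system: a primary store plus a cache."""

    def __init__(self):
        self.database = {}  # stand-in for the main database
        self.cache = {}     # stand-in for Memcached or similar

    def read(self, key):
        # Serve from the cache when possible; otherwise fall back to the database.
        if key in self.cache:
            return self.cache[key]
        value = self.database.get(key)
        if value is not None:
            self.cache[key] = value  # populate the cache for later reads
        return value

    def write(self, key, value):
        # Update the source of truth first, then invalidate the cached copy
        # so that the next read reloads the fresh value from the database.
        self.database[key] = value
        self.cache.pop(key, None)


service = UserService()
service.write("user:1", "Alice")
print(service.read("user:1"))  # cache miss, loaded from the database
service.write("user:1", "Alicia")
print(service.read("user:1"))  # the stale cache entry was invalidated on write
```

Clients of `UserService` only see `read` and `write`; the cache is an implementation detail hidden behind that interface.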

If you are designing a data system or service, a lot of tricky questions arise. How do you ensure that the data remains correct and complete, even when things go wrong internally? How do you provide consistently good performance to clients, even when parts of your system are degraded? How do you scale to handle an increase in load? What does a good API for the service look like?

如果您正在设计数据系统或服务,会出现很多棘手的问题。如何保证数据即使出错,也仍正确和完整?如何确保在系统部分出现问题时,能为客户提供一致良好的性能?如何扩展以处理负载的增加?服务的良好API是什么样的?

There are many factors that may influence the design of a data system, including the skills and experience of the people involved, legacy system dependencies, the timescale for delivery, your organization’s tolerance of different kinds of risk, regulatory constraints, etc. Those factors depend very much on the situation.

有许多因素可能影响数据系统的设计,包括涉及的人员的技能和经验,遗留系统的依赖性,交付时间表,组织对不同风险容忍度,监管限制等。这些因素在很大程度上取决于具体情况。

In this book, we focus on three concerns that are important in most software systems:

在这本书中,我们关注的是大多数软件系统中重要的三个问题:

- Reliability

-

The system should continue to work correctly (performing the correct function at the desired level of performance) even in the face of adversity (hardware or software faults, and even human error). See “Reliability”.

系统应该在逆境中继续正常工作(保持所需的性能水平执行正确的功能),甚至在硬件或软件故障和人为错误的情况下也是如此。请参见“可靠性”。

- Scalability

-

As the system grows (in data volume, traffic volume, or complexity), there should be reasonable ways of dealing with that growth. See “Scalability”.

随着系统规模的增长(数据量、流量或复杂性),应有合理的处理方式。参见“可扩展性”。

- Maintainability

-

Over time, many different people will work on the system (engineering and operations, both maintaining current behavior and adapting the system to new use cases), and they should all be able to work on it productively. See “Maintainability”.

随着时间的推移,许多不同的人将在系统上工作(包括工程和运营,维护当前行为并适应新的用例),他们都应该能够以高效的方式工作。请参见:“可维护性”。

These words are often cast around without a clear understanding of what they mean. In the interest of thoughtful engineering, we will spend the rest of this chapter exploring ways of thinking about reliability, scalability, and maintainability. Then, in the following chapters, we will look at various techniques, architectures, and algorithms that are used in order to achieve those goals.

这些词经常被使用,然而人们往往缺乏明确的理解。为了进行深入的工程思考,我们将花费整个章节探究可靠性、可扩展性和可维护性的思考方式。紧接着,在下一章中,我们将探讨在实现这些目标时所使用的各种技术、架构和算法。

Reliability

Everybody has an intuitive idea of what it means for something to be reliable or unreliable. For software, typical expectations include:

每个人都对什么是可靠或不可靠的东西有一个直观的想法。对于软件来说,典型的期望包括:

-

The application performs the function that the user expected.

应用程序执行用户期望的功能。

-

It can tolerate the user making mistakes or using the software in unexpected ways.

它可以容忍用户犯错误或以意想不到的方式使用软件。

-

Its performance is good enough for the required use case, under the expected load and data volume.

它的表现足够好,以满足预期的负荷和数据量的要求。

-

The system prevents any unauthorized access and abuse.

该系统防止未经授权的访问和滥用。

If all those things together mean “working correctly,” then we can understand reliability as meaning, roughly, “continuing to work correctly, even when things go wrong.”

如果所有这些事情一起意味着“正常工作”,那么我们可以将可靠性大致理解为“即使在出现故障时仍能正确工作”。

The things that can go wrong are called faults, and systems that anticipate faults and can cope with them are called fault-tolerant or resilient. The former term is slightly misleading: it suggests that we could make a system tolerant of every possible kind of fault, which in reality is not feasible. If the entire planet Earth (and all servers on it) were swallowed by a black hole, tolerance of that fault would require web hosting in space; good luck getting that budget item approved. So it only makes sense to talk about tolerating certain types of faults.

能够出错的事情被称为故障,而能够预见并应对故障的系统被称为容错或弹性系统。前者的术语略有误导:它暗示我们可以使系统容忍每种可能的故障,但实际上这是不可行的。如果整个地球(和所有服务器)都被黑洞吞噬,要容忍那种故障就需要在太空中进行网络托管——但很难拿到预算。因此,只有谈论容忍某些类型的故障才有意义。

Note that a fault is not the same as a failure [ 2 ]. A fault is usually defined as one component of the system deviating from its spec, whereas a failure is when the system as a whole stops providing the required service to the user. It is impossible to reduce the probability of a fault to zero; therefore it is usually best to design fault-tolerance mechanisms that prevent faults from causing failures. In this book we cover several techniques for building reliable systems from unreliable parts.

请注意,故障与失效不同。[2] 故障通常被定义为系统中的一个组件偏离其规范,而失效是指整个系统停止向用户提供所需的服务。将故障的概率降至零是不可能的,因此通常最好设计能够防止故障导致失效的容错机制。在本书中,我们介绍了几种利用不可靠部件构建可靠系统的技术。

Counterintuitively, in such fault-tolerant systems, it can make sense to increase the rate of faults by triggering them deliberately—for example, by randomly killing individual processes without warning. Many critical bugs are actually due to poor error handling [ 3 ]; by deliberately inducing faults, you ensure that the fault-tolerance machinery is continually exercised and tested, which can increase your confidence that faults will be handled correctly when they occur naturally. The Netflix Chaos Monkey [ 4 ] is an example of this approach.

在这样的容错系统中,出乎意料的是,通过故意触发它们,例如随机杀死单个进程而不发出警告,可能增加故障率是有意义的。许多关键错误实际上是由于错误处理不当引起的;通过故意引发故障,可以确保容错机制不断得到运作和测试,从而增加您对自然发生故障时正确处理故障的信心。Netflix的混沌猴就是这种方法的例子。

Although we generally prefer tolerating faults over preventing faults, there are cases where prevention is better than cure (e.g., because no cure exists). This is the case with security matters, for example: if an attacker has compromised a system and gained access to sensitive data, that event cannot be undone. However, this book mostly deals with the kinds of faults that can be cured, as described in the following sections.

虽然我们通常更喜欢容忍错误而不是预防错误,但在有些情况下,预防优于治疗(例如,因为没有治愈方法)。安全问题就是这种情况的例子:如果攻击者侵犯了某个系统并获得了敏感数据的访问权限,那么该事件就无法撤消。然而,本书大多数讨论的是可以治愈的故障类型,如下面的章节所描述的那样。

Hardware Faults

When we think of causes of system failure, hardware faults quickly come to mind. Hard disks crash, RAM becomes faulty, the power grid has a blackout, someone unplugs the wrong network cable. Anyone who has worked with large datacenters can tell you that these things happen all the time when you have a lot of machines.

当我们考虑系统故障的原因时,硬件故障很快就会跳入脑海。硬盘崩溃,内存损坏,电网停电,有人拔了错误的网络电缆。任何曾在大型数据中心工作过的人都可以告诉你,当你有很多机器时,这些事情经常发生。

Hard disks are reported as having a mean time to failure (MTTF) of about 10 to 50 years [ 5 , 6 ]. Thus, on a storage cluster with 10,000 disks, we should expect on average one disk to die per day.

硬盘通常被报告有一个平均故障时间(MTTF)约为10到50年[5, 6]。因此,在一个拥有10,000个硬盘的存储群集中,我们平均每天应该期望有一块硬盘损坏。
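The "one disk per day" figure is an order-of-magnitude estimate, and the arithmetic behind it can be checked directly. The sketch below assumes that disks fail independently and at a constant rate, which is a simplification; the function name is made up for this example.

```python
DAYS_PER_YEAR = 365


def expected_failures_per_day(num_disks, mttf_years):
    """Expected disk failures per day, assuming independent failures at a constant rate."""
    return num_disks / (mttf_years * DAYS_PER_YEAR)


for mttf_years in (10, 50):
    rate = expected_failures_per_day(10_000, mttf_years)
    print(f"MTTF of {mttf_years} years: about {rate:.2f} failures per day")
```

For 10,000 disks this gives roughly 0.5 to 2.7 failures per day across the quoted MTTF range, which is consistent with expecting about one dead disk per day.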

Our first response is usually to add redundancy to the individual hardware components in order to reduce the failure rate of the system. Disks may be set up in a RAID configuration, servers may have dual power supplies and hot-swappable CPUs, and datacenters may have batteries and diesel generators for backup power. When one component dies, the redundant component can take its place while the broken component is replaced. This approach cannot completely prevent hardware problems from causing failures, but it is well understood and can often keep a machine running uninterrupted for years.

我们通常的第一反应是将冗余添加到个别硬件组件中,以降低系统的故障率。可以将磁盘设置为RAID配置,服务器可以拥有双重电源和可热插拔的CPU,数据中心可以拥有备用电源的电池和柴油发电机。当一个组件出现故障时,冗余组件可以代替它的位置,而破损的组件则需要被更换。这种方法不能完全防止硬件问题导致故障,但是它是众所周知的,并且通常可以使一台机器连续运行多年。

Until recently, redundancy of hardware components was sufficient for most applications, since it makes total failure of a single machine fairly rare. As long as you can restore a backup onto a new machine fairly quickly, the downtime in case of failure is not catastrophic in most applications. Thus, multi-machine redundancy was only required by a small number of applications for which high availability was absolutely essential.

直到最近,硬件组件的冗余对于大多数应用程序来说已经足够,因为它使单台机器的完全失效变得相当罕见。只要能够相当快地将备份恢复到新机器上,在大多数应用程序中,发生故障时停机时间并不是灾难性的。因此,仅有少数应用程序需要多台机器的冗余,这对于绝对必要的高可用性是必需的。

However, as data volumes and applications’ computing demands have increased, more applications have begun using larger numbers of machines, which proportionally increases the rate of hardware faults. Moreover, in some cloud platforms such as Amazon Web Services (AWS) it is fairly common for virtual machine instances to become unavailable without warning [ 7 ], as the platforms are designed to prioritize flexibility and elasticity[i] over single-machine reliability.

然而,随着数据量和应用程序的计算需求增加,更多的应用程序开始使用更多的机器,这些机器的故障率也相应增加。此外,在一些云平台,例如AWS,虚拟机实例经常会在没有警告的情况下变得不可用[7],因为这些平台的设计更注重灵活性和弹性而不是单机可靠性。

Hence there is a move toward systems that can tolerate the loss of entire machines, by using software fault-tolerance techniques in preference or in addition to hardware redundancy. Such systems also have operational advantages: a single-server system requires planned downtime if you need to reboot the machine (to apply operating system security patches, for example), whereas a system that can tolerate machine failure can be patched one node at a time, without downtime of the entire system (a rolling upgrade; see Chapter 4).

因此,现在越来越倾向于使用软件容错技术来替代或补充硬件冗余,以实现整个机器的失效容忍系统。这样的系统还具有操作上的优势:单服务器系统需要计划停机时间才能重新启动机器(例如,应用操作系统安全补丁),而能够容忍机器故障的系统可以逐个升级节点而不需要整个系统停机(滚动升级;参见第四章)。

Software Errors

We usually think of hardware faults as being random and independent from each other: one machine’s disk failing does not imply that another machine’s disk is going to fail. There may be weak correlations (for example due to a common cause, such as the temperature in the server rack), but otherwise it is unlikely that a large number of hardware components will fail at the same time.

通常我们认为硬件故障是随机和相互独立的:一台机器的硬盘故障并不意味着另一台机器的硬盘会发生故障。可能存在一些弱相关性(例如由于共同的原因,例如服务器机架中的温度),但除此之外,大量硬件组件同时发生故障的可能性很小。

Another class of fault is a systematic error within the system [ 8 ]. Such faults are harder to anticipate, and because they are correlated across nodes, they tend to cause many more system failures than uncorrelated hardware faults [ 5 ]. Examples include:

另一类故障是系统内的系统性错误[8]。 这种故障很难预测,因为它们在节点之间存在相关性,因此它们往往比不相关的硬件故障导致更多的系统故障[5]。 例如:

-

A software bug that causes every instance of an application server to crash when given a particular bad input. For example, consider the leap second on June 30, 2012, that caused many applications to hang simultaneously due to a bug in the Linux kernel [ 9 ].

一个软件错误导致每个应用服务器在接收到特定的错误输入后都会崩溃。例如,考虑2012年6月30日的闰秒导致许多应用程序同时挂起,原因是Linux内核的错误[9]。

-

A runaway process that uses up some shared resource—CPU time, memory, disk space, or network bandwidth.

一个耗尽了一些共享资源的失控进程——CPU时间、内存、磁盘空间或网络带宽。

-

A service that the system depends on that slows down, becomes unresponsive, or starts returning corrupted responses.

系统依赖的服务变慢、无响应或返回错误响应。

-

Cascading failures, where a small fault in one component triggers a fault in another component, which in turn triggers further faults [ 10 ].

级联故障,一个组件的小故障会引发另一个组件的故障,接着又会触发更多的故障[10]。

The bugs that cause these kinds of software faults often lie dormant for a long time until they are triggered by an unusual set of circumstances. In those circumstances, it is revealed that the software is making some kind of assumption about its environment—and while that assumption is usually true, it eventually stops being true for some reason [ 11 ].

导致这些软件故障的错误通常会在很长一段时间内处于休眠状态,直到它们被一组不寻常的情况激活。在那种情况下,软件会揭示出它对环境做出某种假设——虽然这个假设通常是正确的,但它最终由于某种原因停止成立[11]。

There is no quick solution to the problem of systematic faults in software. Lots of small things can help: carefully thinking about assumptions and interactions in the system; thorough testing; process isolation; allowing processes to crash and restart; measuring, monitoring, and analyzing system behavior in production. If a system is expected to provide some guarantee (for example, in a message queue, that the number of incoming messages equals the number of outgoing messages), it can constantly check itself while it is running and raise an alert if a discrepancy is found [ 12 ].

软件系统存在系统性故障问题并非能立即解决。有许多小方法可以帮助解决:认真考虑系统中的假设和交互作用;进行彻底的测试;隔离处理;允许进程崩溃和重新启动;在生产中测量、监控和分析系统的行为。如果一个系统要提供某种保证(例如,在消息队列中,输入消息数等于输出消息数),它可以在运行时不断检查本身,并在发现差异时发出警报 [12]。
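As an illustration of a system that checks its own guarantee while running, the following sketch wraps an in-memory queue with counters and verifies that every incoming message is either still queued or has gone out. The class `AuditedQueue` and its method names are hypothetical; a production system would raise an alert through its monitoring stack rather than return a boolean.

```python
from collections import deque


class AuditedQueue:
    """In-memory queue that counts messages in and out so it can audit itself."""

    def __init__(self):
        self._items = deque()
        self.enqueued = 0
        self.dequeued = 0

    def put(self, item):
        self._items.append(item)
        self.enqueued += 1

    def get(self):
        item = self._items.popleft()
        self.dequeued += 1
        return item

    def check_invariant(self):
        # Every message that came in must be either delivered or still waiting.
        return self.enqueued == self.dequeued + len(self._items)


queue = AuditedQueue()
for n in range(3):
    queue.put(n)
queue.get()
print("consistent:", queue.check_invariant())

queue._items.pop()  # simulate a bug that silently drops a message
print("consistent:", queue.check_invariant())
```

The second check fails because a message disappeared without being counted as delivered, which is the kind of discrepancy worth alerting on.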

Human Errors

Humans design and build software systems, and the operators who keep the systems running are also human. Even when they have the best intentions, humans are known to be unreliable. For example, one study of large internet services found that configuration errors by operators were the leading cause of outages, whereas hardware faults (servers or network) played a role in only 10–25% of outages [ 13 ].

人类设计和构建软件系统,保持系统运行的操作员也是人类。即使他们有最好的意图,人类被证明是不可靠的。例如,一项针对大型互联网服务的研究发现,运营商的配置错误是停机的主要原因,而硬件故障(服务器或网络)仅在10-25%的停机中发挥作用[13]。

How do we make our systems reliable, in spite of unreliable humans? The best systems combine several approaches:

我们如何在人类不可靠的情况下使我们的系统可靠?最好的系统结合了几种方法:

-

Design systems in a way that minimizes opportunities for error. For example, well-designed abstractions, APIs, and admin interfaces make it easy to do “the right thing” and discourage “the wrong thing.” However, if the interfaces are too restrictive people will work around them, negating their benefit, so this is a tricky balance to get right.

以最小化错误机会的方式设计系统。例如,设计良好的抽象、API和管理界面可以使“正确的事情”变得容易,并避免“错误的事情”。然而,如果接口太严格,人们会绕过它们,抵消其好处,因此这是一个棘手的平衡问题。

-

Decouple the places where people make the most mistakes from the places where they can cause failures. In particular, provide fully featured non-production sandbox environments where people can explore and experiment safely, using real data, without affecting real users.

将人们经常犯错的地方与可能导致失败的地方分离开来。特别是,提供完整功能的非生产沙盒环境,人们可以在其中安全地探索和实验,使用真实数据,而不会影响真实用户。

-

Test thoroughly at all levels, from unit tests to whole-system integration tests and manual tests [ 3 ]. Automated testing is widely used, well understood, and especially valuable for covering corner cases that rarely arise in normal operation.

彻底测试各个层面,从单元测试到整个系统集成测试和手动测试[3]。自动化测试被广泛使用,被很好地理解,并且在覆盖在正常操作中很少出现的边缘情况时尤为有价值。

-

Allow quick and easy recovery from human errors, to minimize the impact in the case of a failure. For example, make it fast to roll back configuration changes, roll out new code gradually (so that any unexpected bugs affect only a small subset of users), and provide tools to recompute data (in case it turns out that the old computation was incorrect).

允许快速轻松地从人为错误中恢复,以最小化故障的影响。例如,快速回滚配置更改,逐步推出新代码(以便任何意外错误只影响一小部分用户),并提供重新计算数据的工具(以防旧计算结果不正确)。

-

Set up detailed and clear monitoring, such as performance metrics and error rates. In other engineering disciplines this is referred to as telemetry. (Once a rocket has left the ground, telemetry is essential for tracking what is happening, and for understanding failures [ 14 ].) Monitoring can show us early warning signals and allow us to check whether any assumptions or constraints are being violated. When a problem occurs, metrics can be invaluable in diagnosing the issue.

建立详细和清晰的监控,例如性能指标和错误率。在其他工程学科中,这被称为遥测。 (一旦火箭离开地面,遥测对于跟踪发生的情况和理解故障至关重要[14]。)监测可以向我们显示早期警告信号,并允许我们检查是否违反任何假设或约束。当问题发生时,指标可以非常有价值地诊断问题。

-

Implement good management practices and training—a complex and important aspect, and beyond the scope of this book.

实施良好的管理实践和培训——这是一个复杂而重要的方面,超出本书的范围。
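One of the recovery techniques in the list above, rolling out new code gradually so that unexpected bugs affect only a small subset of users, can be sketched as a stable percentage gate. The function `in_rollout`, the use of a SHA-256 hash, and the 100-bucket scheme are assumptions made for this example, not a description of any particular deployment tool.

```python
import hashlib


def in_rollout(user_id, percent):
    """Return True if the user falls into the first `percent` of 100 stable buckets."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < percent


users = [f"user-{i}" for i in range(1000)]
for percent in (1, 10, 50, 100):
    enabled = sum(in_rollout(u, percent) for u in users)
    print(f"{percent:3d}% rollout: {enabled} of {len(users)} users see the new code")
```

Because the bucket depends only on the user ID, a user who sees the new code at 10% still sees it at 50%, and setting the percentage back to 0 acts as a fast rollback.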

How Important Is Reliability?

Reliability is not just for nuclear power stations and air traffic control software—more mundane applications are also expected to work reliably. Bugs in business applications cause lost productivity (and legal risks if figures are reported incorrectly), and outages of ecommerce sites can have huge costs in terms of lost revenue and damage to reputation.

可靠性不仅针对核电站和空中交通控制软件——更普通的应用程序同样需要保证可靠运行。商业应用程序中的漏洞会导致生产力下降(如果数字报告不正确,还会面临法律风险),电子商务网站的停机则会造成巨额损失,且声誉受损。

Even in “noncritical” applications we have a responsibility to our users. Consider a parent who stores all their pictures and videos of their children in your photo application [ 15 ]. How would they feel if that database was suddenly corrupted? Would they know how to restore it from a backup?

即使在“非关键”应用程序中,我们也有责任对我们的用户负责。考虑一个将他们所有的孩子的照片和视频存储在你的照片应用程序[15]中的父母。如果该数据库突然损坏,他们会感觉如何?他们知道如何从备份中恢复它吗?

There are situations in which we may choose to sacrifice reliability in order to reduce development cost (e.g., when developing a prototype product for an unproven market) or operational cost (e.g., for a service with a very narrow profit margin)—but we should be very conscious of when we are cutting corners.

在某些情况下,我们可能选择在可靠性方面做出牺牲,以降低开发成本(例如,针对未经证实的市场开发原型产品)或运营成本(例如,针对利润非常微薄的服务)——但我们应该非常清醒地意识到我们是在削减开销。

Scalability

Even if a system is working reliably today, that doesn’t mean it will necessarily work reliably in the future. One common reason for degradation is increased load: perhaps the system has grown from 10,000 concurrent users to 100,000 concurrent users, or from 1 million to 10 million. Perhaps it is processing much larger volumes of data than it did before.

即使系统今天能够可靠地运行,也不意味着它未来也能保持可靠性。一个常见的退化原因是负载增加:可能系统从10,000个并发用户增长到100,000个并发用户,或者从1百万增长到1千万。也许它正在处理比以前更大量的数据。

Scalability is the term we use to describe a system’s ability to cope with increased load. Note, however, that it is not a one-dimensional label that we can attach to a system: it is meaningless to say “X is scalable” or “Y doesn’t scale.” Rather, discussing scalability means considering questions like “If the system grows in a particular way, what are our options for coping with the growth?” and “How can we add computing resources to handle the additional load?”

可扩展性是我们用来描述系统处理增加负载的能力的术语。然而,需要注意的是,它不是我们可以给系统附加的一维标签:说“X是可扩展的”或“Y不可扩展”是没有意义的。相反,讨论可扩展性意味着考虑问题,例如“如果系统以特定方式增长,我们处理增长的选择是什么?”和“我们如何添加计算资源来处理额外的负载?”

Describing Load

First, we need to succinctly describe the current load on the system; only then can we discuss growth questions (what happens if our load doubles?). Load can be described with a few numbers which we call load parameters. The best choice of parameters depends on the architecture of your system: it may be requests per second to a web server, the ratio of reads to writes in a database, the number of simultaneously active users in a chat room, the hit rate on a cache, or something else. Perhaps the average case is what matters for you, or perhaps your bottleneck is dominated by a small number of extreme cases.

首先,我们需要简洁地描述系统上的当前负载;只有这样我们才能讨论增长问题(如果我们的负载翻倍会发生什么?)。负载可以用一些数字来描述,我们称之为负载参数。参数的最佳选择取决于您系统的架构:可能是每秒钟对Web服务器的请求,数据库中读写比例,聊天室中的同时活跃用户数量,缓存命中率或其他内容。也许对您来说平均情况最重要,或者您的瓶颈由少数几个极端情况主导。

To make this idea more concrete, let’s consider Twitter as an example, using data published in November 2012 [ 16 ]. Two of Twitter’s main operations are:

为了使这个想法更具体化,让我们以Twitter为例,使用2012年11月发表的数据[16]。 Twitter的两个主要操作是:

- Post tweet

-

A user can publish a new message to their followers (4.6k requests/sec on average, over 12k requests/sec at peak).

一个用户可以向他们的关注者发布一条新信息(平均每秒4.6k个请求,峰值时每秒超过12k个请求)。

- Home timeline

-

A user can view tweets posted by the people they follow (300k requests/sec).

一个用户可以查看其关注者发布的推文(每秒300k次请求)。

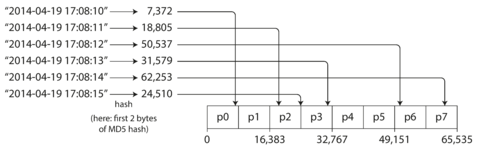

Simply handling 12,000 writes per second (the peak rate for posting tweets) would be fairly easy. However, Twitter’s scaling challenge is not primarily due to tweet volume, but due to fan-out[ii]: each user follows many people, and each user is followed by many people. There are broadly two ways of implementing these two operations:

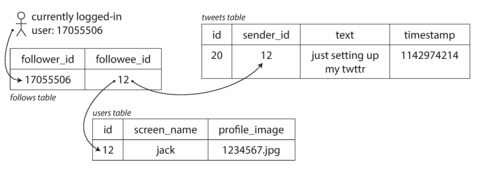

处理每秒12000次写入(发布推文的峰值速率)将相当容易。然而,Twitter的扩展挑战不主要是由于推文数量,而是由于扇出-每个用户都关注许多人,每个用户都被许多人关注。实现这两个操作通常有两种方式:

1.

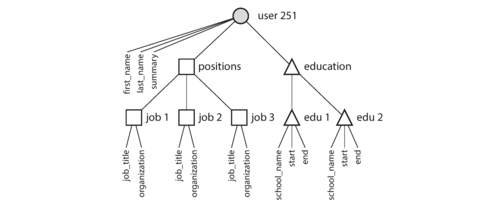

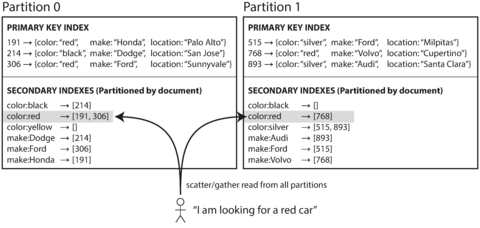

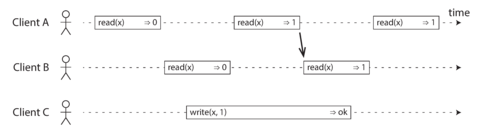

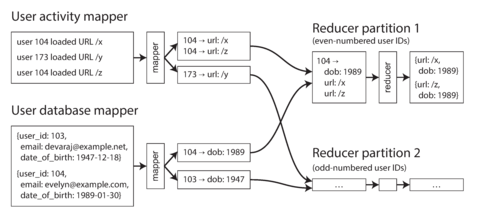

Posting a tweet simply inserts the new tweet into a global collection of tweets. When a user requests their home timeline, look up all the people they follow, find all the tweets for each of those users, and merge them (sorted by time). In a relational database like the one in Figure 1-2, you could write a query such as:

发布一条推文只会将其插入到全球推文集合中。当用户请求其主页时间线时,找出他们关注的所有人,找到每个用户的所有推文,并合并它们(按时间排序)。在关系型数据库中,可以编写查询语句,例如图1-2中的查询语句:

    SELECT tweets.*, users.* FROM tweets
      JOIN users   ON tweets.sender_id    = users.id
      JOIN follows ON follows.followee_id = users.id
      WHERE follows.follower_id = current_user

2.

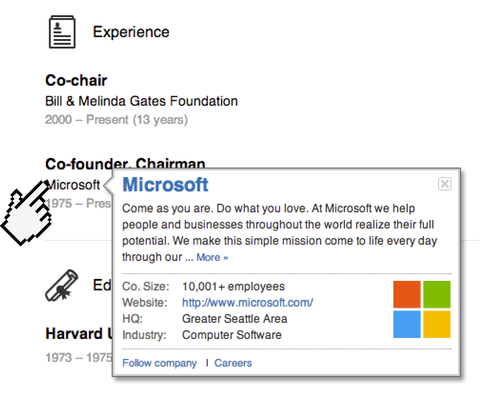

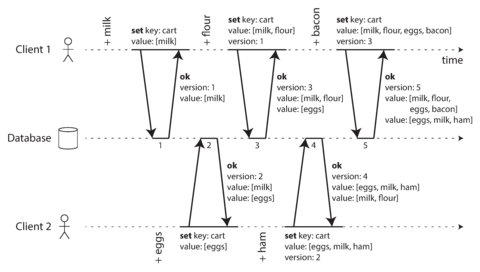

Maintain a cache for each user’s home timeline, like a mailbox of tweets for each recipient user (see Figure 1-3). When a user posts a tweet, look up all the people who follow that user, and insert the new tweet into each of their home timeline caches. The request to read the home timeline is then cheap, because its result has been computed ahead of time.

为每个用户的主页时间线维护一个缓存——就像每个接受者用户的推文邮箱(参见图1-3)。当用户发布一条推文时,查找所有关注该用户的人,并将新的推文插入到他们每个人的主页时间线缓存中。因为结果已经提前计算出来,所以读取主页时间线的请求非常便宜。
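The two approaches can be contrasted in a small in-memory sketch. All names here (`follow`, `post_tweet_v1`, `home_timeline_v2`, and so on) are invented for illustration, plain Python collections stand in for the database and the timeline caches, and the newest-first ordering is an assumption; none of this reflects Twitter's actual implementation.

```python
from collections import defaultdict

follows = defaultdict(set)    # follower -> the people they follow
followers = defaultdict(set)  # followee -> the people who follow them


def follow(follower, followee):
    follows[follower].add(followee)
    followers[followee].add(follower)


# Approach 1: cheap write, expensive read (merge at read time).
tweets = []  # global collection of (timestamp, sender, text)


def post_tweet_v1(timestamp, sender, text):
    tweets.append((timestamp, sender, text))


def home_timeline_v1(user):
    wanted = follows[user]
    return sorted((t for t in tweets if t[1] in wanted), reverse=True)


# Approach 2: expensive write (one insert per follower), cheap read.
timeline_cache = defaultdict(list)  # user -> precomputed home timeline


def post_tweet_v2(timestamp, sender, text):
    for follower in followers[sender]:
        timeline_cache[follower].append((timestamp, sender, text))


def home_timeline_v2(user):
    return sorted(timeline_cache[user], reverse=True)


follow("alice", "bob")
follow("alice", "carol")
for ts, sender, text in [(1, "bob", "hello"), (2, "carol", "hi"), (3, "alice", "my own tweet")]:
    post_tweet_v1(ts, sender, text)
    post_tweet_v2(ts, sender, text)

print(home_timeline_v1("alice"))
print(home_timeline_v2("alice"))
```

Both functions return the same timeline for a given user; what differs is whether the work of matching tweets to followers happens when reading or when posting.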

Figure 1-2. Simple relational schema for implementing a Twitter home timeline.

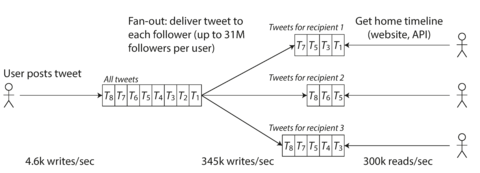

Figure 1-3. Twitter’s data pipeline for delivering tweets to followers, with load parameters as of November 2012 [ 16 ].

The first version of Twitter used approach 1, but the systems struggled to keep up with the load of home timeline queries, so the company switched to approach 2. This works better because the average rate of published tweets is almost two orders of magnitude lower than the rate of home timeline reads, and so in this case it’s preferable to do more work at write time and less at read time.

Twitter的第一版使用方法1,但是那时系统很难跟上主页时间轴查询的负载,所以公司转而使用方法2。这个方法效果更好,因为发布推文的平均速率比主页时间轴读取的速率低了近两个数量级,所以在这种情况下,在写入时做更多的工作,而在读取时做更少的工作更好。

However, the downside of approach 2 is that posting a tweet now requires a lot of extra work. On average, a tweet is delivered to about 75 followers, so 4.6k tweets per second become 345k writes per second to the home timeline caches. But this average hides the fact that the number of followers per user varies wildly, and some users have over 30 million followers. This means that a single tweet may result in over 30 million writes to home timelines! Doing this in a timely manner—Twitter tries to deliver tweets to followers within five seconds—is a significant challenge.

然而,方法2的缺点是现在发布推文需要额外的工作量。平均而言,一条推文会被传递给约75个关注者,因此每秒4.6k条推文就变成了对主页时间轴缓存的345k次写入。但这个平均数隐藏了一个事实,即每个用户的关注者数量差异很大,有些用户拥有超过3000万的关注者。这意味着一条推文可能会导致3000万次对主页时间轴的写入!而在Twitter尝试在五秒内向关注者传递推文的情况下及时完成这项工作是一个巨大的挑战。
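The write amplification of approach 2 follows from simple multiplication, shown below with the figures quoted in the text. The final line is a derived estimate rather than a published number: it assumes that all of a celebrity's 30 million timeline writes have to complete within the five-second delivery target.

```python
avg_tweets_per_sec = 4_600
avg_followers_per_tweet = 75

# Each posted tweet turns into one cache write per follower.
timeline_writes_per_sec = avg_tweets_per_sec * avg_followers_per_tweet
print(f"average timeline writes per second: {timeline_writes_per_sec:,}")

celebrity_followers = 30_000_000
delivery_target_seconds = 5

# Write rate needed to fan out a single celebrity tweet within the target.
celebrity_write_rate = celebrity_followers / delivery_target_seconds
print(f"writes per second for one celebrity tweet: {celebrity_write_rate:,.0f}")
```

Under that assumption, a single celebrity tweet demands a burst of about 6 million writes per second, far above the 345k per second average, which is why the distribution of followers matters more than the mean.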

In the example of Twitter, the distribution of followers per user (maybe weighted by how often those users tweet) is a key load parameter for discussing scalability, since it determines the fan-out load. Your application may have very different characteristics, but you can apply similar principles to reasoning about its load.

以 Twitter 为例,用户的关注者分布(可能根据其推文频率加权)是讨论可扩展性的关键负载参数,因为它决定了扇出负载。你的应用程序可能具有非常不同的特点,但你可以应用类似的原则来推理它的负载。

The final twist of the Twitter anecdote: now that approach 2 is robustly implemented, Twitter is moving to a hybrid of both approaches. Most users’ tweets continue to be fanned out to home timelines at the time when they are posted, but a small number of users with a very large number of followers (i.e., celebrities) are excepted from this fan-out. Tweets from any celebrities that a user may follow are fetched separately and merged with that user’s home timeline when it is read, like in approach 1. This hybrid approach is able to deliver consistently good performance. We will revisit this example in Chapter 12 after we have covered some more technical ground.

发推特轶事的最后转折:现在实现了方法2,推特正在朝着两种方法的混合方式迈进。大多数用户的推特在发布时仍会在主页时间轴上扇出,但有一小部分拥有大量粉丝(即名人)的用户会被例外。用户可能会关注的任何名人的推特会单独获取并在读取时与该用户的主页时间轴合并,类似于方法1。这种混合方法能够提供一致的良好性能。在我们覆盖了一些更技术性的地面后,我们将在第12章重新审视这个例子。

Describing Performance

Once you have described the load on your system, you can investigate what happens when the load increases. You can look at it in two ways:

一旦你描述了系统的负载,你就可以调查当负载增加时会发生什么。你可以从两个方面来看待它:

-

When you increase a load parameter and keep the system resources (CPU, memory, network bandwidth, etc.) unchanged, how is the performance of your system affected?

当您增加负载参数并保持系统资源(CPU,内存,网络带宽等)不变时,系统的性能会受到影响吗?

-

When you increase a load parameter, how much do you need to increase the resources if you want to keep performance unchanged?

当你增加负载参数时,如果想保持性能不变,需要增加多少资源?

Both questions require performance numbers, so let’s look briefly at describing the performance of a system.

两个问题都需要性能数字,因此让我们简要地描述一下系统的性能。

In a batch processing system such as Hadoop, we usually care about throughput: the number of records we can process per second, or the total time it takes to run a job on a dataset of a certain size.[iii] In online systems, what’s usually more important is the service’s response time, that is, the time between a client sending a request and receiving a response.

在像Hadoop这样的批处理系统中,我们通常关心吞吐量——每秒可以处理的记录数量,或者在特定大小的数据集上运行作业所需的总时间。在在线系统中,更重要的是服务的响应时间——即客户端发送请求和接收响应之间的时间。

Latency and response time

Latency and response time are often used synonymously, but they are not the same. The response time is what the client sees: besides the actual time to process the request (the service time), it includes network delays and queueing delays. Latency is the duration that a request is waiting to be handled, during which it is latent, awaiting service [ 17 ].

延迟和响应时间通常被视为同义词,但它们并不相同。响应时间是客户端所看到的:除了实际处理请求所需的时间(服务时间)之外,它还包括网络延迟和排队延迟。延迟是请求等待处理的时间,期间需要等待服务[17]。

Even if you only make the same request over and over again, you’ll get a slightly different response time on every try. In practice, in a system handling a variety of requests, the response time can vary a lot. We therefore need to think of response time not as a single number, but as a distribution of values that you can measure.

即使你一遍又一遍地做出相同的请求,每次尝试得到的响应时间也会稍有不同。在实践中,对于处理各种请求的系统,响应时间可能会有很大的差异。因此,我们需要将响应时间看作一组可以测量的值的分布,而不是一个单一的数字。

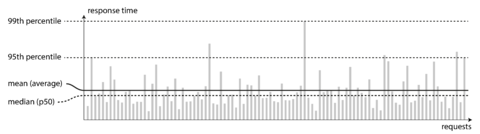

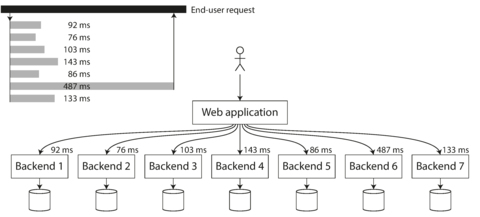

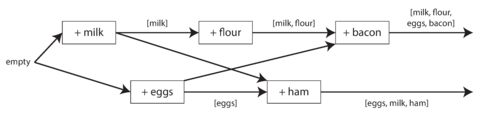

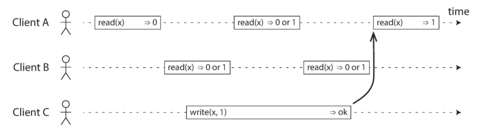

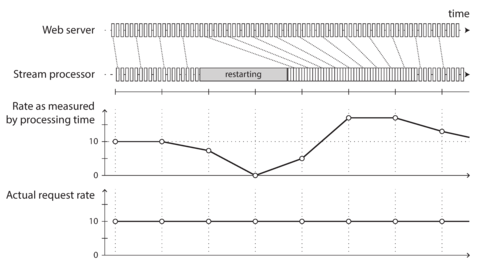

In Figure 1-4, each gray bar represents a request to a service, and its height shows how long that request took. Most requests are reasonably fast, but there are occasional outliers that take much longer. Perhaps the slow requests are intrinsically more expensive, e.g., because they process more data. But even in a scenario where you’d think all requests should take the same time, you get variation: random additional latency could be introduced by a context switch to a background process, the loss of a network packet and TCP retransmission, a garbage collection pause, a page fault forcing a read from disk, mechanical vibrations in the server rack [ 18 ], or many other causes.

在图1-4中,每个灰色条代表对服务的请求,其高度显示了该请求所花费的时间。大多数请求的速度都比较快,但偶尔会出现一些耗时更长的异常值。也许慢请求本质上更昂贵,例如因为它们处理的数据更多。但即使在所有请求应该花费相同时间的情况下,你也会得到变化:随机额外的延迟可能会由于切换到后台进程、网络数据包的丢失和TCP重传、垃圾收集暂停、在服务器架中机械振动 [18] 或其他许多原因引入。

Figure 1-4. Illustrating mean and percentiles: response times for a sample of 100 requests to a service.

It’s common to see the average response time of a service reported. (Strictly speaking, the term “average” doesn’t refer to any particular formula, but in practice it is usually understood as the arithmetic mean: given n values, add up all the values, and divide by n.) However, the mean is not a very good metric if you want to know your “typical” response time, because it doesn’t tell you how many users actually experienced that delay.

通常会报告服务的平均响应时间。(严格来说,“平均值”一词并不指代任何特定的公式,但在实践中通常被理解为算术平均值:给定 n 个值,将所有值相加,然后除以 n。)然而,如果你想知道你的“典型”响应时间,平均数不是一个很好的度量标准,因为它不告诉你有多少个用户实际上经历了那个延迟。

Usually it is better to use percentiles. If you take your list of response times and sort it from fastest to slowest, then the median is the halfway point: for example, if your median response time is 200 ms, that means half your requests return in less than 200 ms, and half your requests take longer than that.

通常最好使用百分位数。如果您将响应时间列表按从最快到最慢进行排序,则中位数是中间点:例如,如果中位数响应时间为200毫秒,则表示您一半的请求在200毫秒以下返回,一半的请求需要更长时间。

This makes the median a good metric if you want to know how long users typically have to wait: half of user requests are served in less than the median response time, and the other half take longer than the median. The median is also known as the 50th percentile, and sometimes abbreviated as p50. Note that the median refers to a single request; if the user makes several requests (over the course of a session, or because several resources are included in a single page), the probability that at least one of them is slower than the median is much greater than 50%.

这使得中位数成为一种良好的度量方法,如果您想知道用户通常需要等待多长时间:一半的用户请求在低于中位响应时间的情况下得到服务,另一半需要比中位数更长的时间。中位数也被称为第50个百分位数,并有时缩写为p50。请注意,中位数是指单个请求;如果用户发出多个请求(在会话期间或因为单个页面包括多个资源),则至少有一个请求慢于中位数的概率远大于50%。
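The claim about several requests can be quantified under one simplifying assumption: that the response times of a user's requests are independent of each other. In that case the probability that at least one of n requests is slower than a given percentile is one minus the probability that all n are faster. The function name below is made up for this sketch.

```python
def prob_at_least_one_slow(num_requests, percentile=50.0):
    """Probability that at least one of `num_requests` independent requests
    exceeds the given percentile of the response time distribution."""
    prob_single_fast = percentile / 100.0
    return 1.0 - prob_single_fast ** num_requests


for n in (1, 5, 10):
    print(f"{n:2d} requests: P(at least one slower than the median) = "
          f"{prob_at_least_one_slow(n):.3f}")

print(f"100 requests: P(at least one slower than p99) = "
      f"{prob_at_least_one_slow(100, percentile=99.0):.3f}")
```

With five requests the chance of hitting at least one slower-than-median response is already about 97%, and a page that issues 100 requests has roughly a 63% chance of including one that is slower than the 99th percentile.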

In order to figure out how bad your outliers are, you can look at higher percentiles: the 95th, 99th, and 99.9th percentiles are common (abbreviated p95, p99, and p999). They are the response time thresholds at which 95%, 99%, or 99.9% of requests are faster than that particular threshold. For example, if the 95th percentile response time is 1.5 seconds, that means 95 out of 100 requests take less than 1.5 seconds, and 5 out of 100 requests take 1.5 seconds or more. This is illustrated in Figure 1-4.

为了弄清异常数据有多严重,你可以查看更高的百分位数:95%、99%和99.9%百分位数是常见的(简写为p95、p99和p999)。它们是响应时间阈值,其中95%、99%或99.9%的请求速度比特定阈值更快。举例来说,如果95%分位数的响应时间为1.5秒,就意味着100个请求中有95个会在1.5秒内完成,而有5个需要1.5秒或更长时间。这在图1-4中有所说明。
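A small sketch shows how the mean and the percentiles can tell very different stories about the same data. The sample values are invented, and the nearest-rank definition of a percentile used here is only one of several conventions; monitoring systems may interpolate or approximate instead.

```python
import math
import statistics


def percentile(values, p):
    """Nearest-rank percentile: the smallest value such that at least
    p percent of the values are less than or equal to it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical response times in milliseconds, including two slow outliers.
response_times_ms = [120, 95, 110, 130, 105, 98, 115, 102, 125, 108,
                     112, 99, 101, 118, 122, 107, 104, 1500, 111, 2300]

print("mean:", statistics.mean(response_times_ms))
print("p50: ", percentile(response_times_ms, 50))
print("p95: ", percentile(response_times_ms, 95))
print("p99: ", percentile(response_times_ms, 99))
```

In this sample the two outliers pull the mean to 289.1 ms, although the median is 110 ms and most requests finish in under 130 ms. The high percentiles (1500 ms and 2300 ms) are what expose the outliers.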

High percentiles of response times, also known as tail latencies, are important because they directly affect users’ experience of the service. For example, Amazon describes response time requirements for internal services in terms of the 99.9th percentile, even though it only affects 1 in 1,000 requests. This is because the customers with the slowest requests are often those who have the most data on their accounts because they have made many purchases; that is, they’re the most valuable customers [ 19 ]. It’s important to keep those customers happy by ensuring the website is fast for them: Amazon has also observed that a 100 ms increase in response time reduces sales by 1% [ 20 ], and others report that a 1-second slowdown reduces a customer satisfaction metric by 16% [ 21 , 22 ].

高响应时间的百分位,也称为尾部延迟,非常重要,因为直接影响用户对服务的体验。例如,亚马逊在内部服务中以99.9百分位的响应时间要求为例,即使只影响了1,000个请求中的1个。这是因为最慢请求的客户通常是那些拥有许多账户数据的客户,因为他们进行了多次购买,即他们是最有价值的客户[19]。通过确保网站对这些客户快速,可以保持这些客户的满意度:亚马逊还观察到,响应时间增加100毫秒会导致销售额下降1%[20],其他人则报告,1秒的减速会将客户满意度指标降低16% [21,22]。

On the other hand, optimizing the 99.99th percentile (the slowest 1 in 10,000 requests) was deemed too expensive, and not beneficial enough, for Amazon’s purposes. Reducing response times at very high percentiles is difficult because they are easily affected by random events outside of your control, and the benefits are diminishing.

另一方面,优化第99.99个百分位数(最慢的10000个请求中的1个)被认为对于亚马逊的目的来说太昂贵且利益不足。在非常高的百分位数上降低响应时间很困难,因为它们很容易受到无法控制的随机事件的影响,而且效益逐渐变小。

For example, percentiles are often used in service level objectives (SLOs) and service level agreements (SLAs), contracts that define the expected performance and availability of a service. An SLA may state that the service is considered to be up if it has a median response time of less than 200 ms and a 99th percentile under 1 s (if the response time is longer, it might as well be down), and the service may be required to be up at least 99.9% of the time. These metrics set expectations for clients of the service and allow customers to demand a refund if the SLA is not met.

例如,百分位数通常用于服务水平目标(SLO)和服务水平协议(SLA),这些协议定义了服务的预期性能和可用性。SLA 可以说明,如果服务的中位响应时间小于 200 毫秒且 99 百分位数小于 1 秒,则认为该服务处于上线状态(如果响应时间更长,则可以认为已经下线),并且该服务可能需要保持至少 99.9% 的可用性。这些指标为服务的客户设定了期望,并允许客户在未达到 SLA 的情况下要求退款。
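The SLA described above can be sketched as a check over measurement windows. This is a minimal sketch with assumptions: a window is treated as "up" when both thresholds from the text hold, availability is the fraction of windows that are up, and the function names and windowing scheme are hypothetical rather than taken from any real SLA.

```python
import statistics


def window_is_up(times_ms, median_limit_ms=200.0, p99_limit_ms=1000.0):
    """Return True if one window of response times meets both thresholds."""
    ordered = sorted(times_ms)
    n = len(ordered)
    # Nearest-rank p99, using integer arithmetic for ceil(0.99 * n).
    p99 = ordered[(99 * n + 99) // 100 - 1]
    return statistics.median(ordered) < median_limit_ms and p99 < p99_limit_ms


def availability(windows):
    """Fraction of measurement windows in which the service counted as up."""
    up = sum(1 for w in windows if window_is_up(w))
    return up / len(windows)


if __name__ == "__main__":
    healthy = [100] * 98 + [900] * 2      # p99 is 900 ms: counts as up
    degraded = [100] * 97 + [2000] * 3    # p99 is 2000 ms: counts as down
    windows = [healthy] * 999 + [degraded]
    print("availability:", availability(windows))
    print("meets 99.9% target:", availability(windows) >= 0.999)
```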

Queueing delays often account for a large part of the response time at high percentiles. As a server can only process a small number of things in parallel (limited, for example, by its number of CPU cores), it only takes a small number of slow requests to hold up the processing of subsequent requests, an effect sometimes known as head-of-line blocking. Even if those subsequent requests are fast to process on the server, the client will see a slow overall response time due to the time waiting for the prior request to complete. Due to this effect, it is important to measure response times on the client side.

排队延迟通常占高百分位响应时间的很大一部分。由于一个服务器只能处理少量并行事项(例如受其CPU核心数量限制),只需要极少量缓慢请求就足以阻塞后续请求的处理——这种效应有时被称为头阻塞。即使这些后续请求在服务器上处理很快,客户端也会因等待先前请求完成而看到较慢的总响应时间。由于此效应,客户端测量响应时间非常重要。
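Head-of-line blocking can be seen in a small simulation. The model below is an assumption made for illustration: a server with a fixed number of workers that takes requests in arrival order, with all times in whole milliseconds. The function name and the request data are invented.

```python
import heapq


def simulate_fifo_server(requests, num_workers=1):
    """Simulate a first-come, first-served server.

    `requests` is a list of (arrival_ms, service_ms) tuples sorted by arrival.
    Returns the response time seen by the client for each request, that is,
    queueing delay plus service time.
    """
    free_at = [0] * num_workers          # time at which each worker is idle
    heapq.heapify(free_at)
    response_times = []
    for arrival, service in requests:
        earliest_free = heapq.heappop(free_at)
        start = max(arrival, earliest_free)   # wait if every worker is busy
        finish = start + service
        heapq.heappush(free_at, finish)
        response_times.append(finish - arrival)
    return response_times


if __name__ == "__main__":
    all_fast = [(0, 10), (100, 10), (200, 10), (300, 10)]
    one_slow = [(0, 1000), (100, 10), (200, 10), (300, 10)]
    print("all fast:", simulate_fifo_server(all_fast))
    print("one slow:", simulate_fifo_server(one_slow))
```

In the second run the three later requests each need only 10 ms of processing, yet the client observes response times of several hundred milliseconds because they queue behind the slow one.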

When generating load artificially in order to test the scalability of a system, the load-generating client needs to keep sending requests independently of the response time. If the client waits for the previous request to complete before sending the next one, that behavior has the effect of artificially keeping the queues shorter in the test than they would be in reality, which skews the measurements [ 23 ].

当人工产生负载以测试系统的可扩展性时,产生负载的客户端需要独立于响应时间继续发送请求。如果客户端在发送下一个请求之前等待前一个请求完成,这种行为会在测试中人为地使队列比实际上要短,从而扭曲测量结果[23]。
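The difference between the two kinds of load generator can be sketched with a simulation rather than real network traffic. The model is an assumption for illustration: a single-worker server, a client that intends to send one request every `interval_ms`, and times in whole milliseconds. The function names are hypothetical.

```python
def open_loop_response_times(service_times_ms, interval_ms):
    """Client sends on a fixed schedule, regardless of earlier responses."""
    server_free_at = 0
    observed = []
    for i, service in enumerate(service_times_ms):
        sent_at = i * interval_ms
        start = max(sent_at, server_free_at)   # request may have to queue
        server_free_at = start + service
        observed.append(server_free_at - sent_at)
    return observed


def closed_loop_response_times(service_times_ms, interval_ms):
    """Client waits for the previous response before sending the next request."""
    next_send = 0
    observed = []
    for service in service_times_ms:
        sent_at = next_send
        finished_at = sent_at + service        # server is always idle on arrival
        observed.append(finished_at - sent_at)
        next_send = max(sent_at + interval_ms, finished_at)
    return observed


if __name__ == "__main__":
    service = [10, 1000, 10, 10, 10]           # one slow request in the middle
    print("open loop:  ", open_loop_response_times(service, 100))
    print("closed loop:", closed_loop_response_times(service, 100))
```

The closed-loop client never lets a queue build up, so it reports only one slow request, whereas the open-loop client also records the delay suffered by the requests that arrived while the server was busy.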

Figure 1-5. When several backend calls are needed to serve a request, it takes just a single slow backend request to slow down the entire end-user request.

Approaches for Coping with Load

Now that we have discussed the parameters for describing load and metrics for measuring performance, we can start discussing scalability in earnest: how do we maintain good performance even when our load parameters increase by some amount?

现在我们已经讨论了描述负载的参数和测量性能的指标,我们可以认真讨论可扩展性了:即使我们的负载参数增加了一定量,我们如何保持良好的性能?

An architecture that is appropriate for one level of load is unlikely to cope with 10 times that load. If you are working on a fast-growing service, it is therefore likely that you will need to rethink your architecture on every order of magnitude load increase—or perhaps even more often than that.

如果一种架构适合某个负载水平,那么它很可能无法应对10倍于此的负载。如果你在开发一个快速增长的服务,那么在每一次负载增加一个数量级时,你很可能需要重新思考你的架构,甚至需要更频繁地重构。

People often talk of a dichotomy between scaling up (vertical scaling, moving to a more powerful machine) and scaling out (horizontal scaling, distributing the load across multiple smaller machines). Distributing load across multiple machines is also known as a shared-nothing architecture. A system that can run on a single machine is often simpler, but high-end machines can become very expensive, so very intensive workloads often can’t avoid scaling out. In reality, good architectures usually involve a pragmatic mixture of approaches: for example, using several fairly powerful machines can still be simpler and cheaper than a large number of small virtual machines.

人们经常谈论缩放的二分法(垂直缩放,移动到更强大的机器和水平缩放,将负载分布在多台较小的机器上)。在多台机器上分布负载也被称为“不共享任何东西”的架构。可以在单个机器上运行的系统通常更简单,但高端机器可能非常昂贵,因此非常密集的工作负载通常无法避免缩放。实际上,良好的架构通常涉及实用主义的方法混合:例如,使用几台相对强大的机器仍然可能比大量小虚拟机更简单,更便宜。

Some systems are elastic, meaning that they can automatically add computing resources when they detect a load increase, whereas other systems are scaled manually (a human analyzes the capacity and decides to add more machines to the system). An elastic system can be useful if load is highly unpredictable, but manually scaled systems are simpler and may have fewer operational surprises (see “Rebalancing Partitions”).

一些系统是弹性的,意味着它们在检测到负载增加时可以自动添加计算资源,而其他系统则需要手动扩容(由人员分析容量并决定是否需要添加更多机器)。当负载高度不可预测时,弹性系统可能非常有用,但手动扩容的系统更简单,可能会有更少的操作意外(请参见“重新平衡分区”)。
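To make the idea of an elastic system a little more concrete, here is a minimal sketch of the kind of rule an automatic scaler might apply. Everything in it is an assumption for illustration: the function name, the per-machine capacity, and the target utilization are hypothetical and do not describe any particular system.

```python
import math


def desired_machine_count(load_rps, capacity_rps_per_machine,
                          target_utilization=0.5, min_machines=1):
    """Number of machines needed so that each one runs at or below
    `target_utilization` of its capacity for the observed load."""
    usable_capacity = capacity_rps_per_machine * target_utilization
    needed = math.ceil(load_rps / usable_capacity)
    return max(needed, min_machines)


if __name__ == "__main__":
    for load in (0, 4500, 4501, 20000):
        print(load, "req/s ->", desired_machine_count(load, 1000), "machines")
```

A manually scaled system performs the same calculation, except that a person looks at the capacity figures and decides when to add machines.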

While distributing stateless services across multiple machines is fairly straightforward, taking stateful data systems from a single node to a distributed setup can introduce a lot of additional complexity. For this reason, common wisdom until recently was to keep your database on a single node (scale up) until scaling cost or high-availability requirements forced you to make it distributed.

将无状态服务分发到多台机器上相对来说相当简单,但将有状态数据系统从单节点迁移至分布式环境则可能引入大量额外复杂性。因此,直到不久之前,通常被认为明智之举是将数据库保持在单一节点上(纵向扩展)直至扩展成本或高可用性需求迫使你将其变为分布式环境。

As the tools and abstractions for distributed systems get better, this common wisdom may change, at least for some kinds of applications. It is conceivable that distributed data systems will become the default in the future, even for use cases that don’t handle large volumes of data or traffic. Over the course of the rest of this book we will cover many kinds of distributed data systems, and discuss how they fare not just in terms of scalability, but also ease of use and maintainability.

随着分布式系统的工具和抽象层的不断完善,这些普遍的认识可能会改变,至少对某些应用而言是如此。未来,分布式数据系统有可能成为默认选择,即使是用于不处理大量数据或流量的案例。在本书的其余部分,我们将涵盖许多种分布式数据系统,并讨论它们在可扩展性、易用性和可维护性方面的表现。

The architecture of systems that operate at large scale is usually highly specific to the application: there is no such thing as a generic, one-size-fits-all scalable architecture (informally known as magic scaling sauce). The problem may be the volume of reads, the volume of writes, the volume of data to store, the complexity of the data, the response time requirements, the access patterns, or (usually) some mixture of all of these plus many more issues.

大规模操作系统的架构通常高度特定于应用程序——没有通用的、一刀切的可扩展架构(俗称神奇扩展酱)。问题可能是读取量、写入量、存储数据量、数据复杂性、响应时间要求、访问模式,或(通常)所有这些问题的混合,加上许多其他问题。

For example, a system that is designed to handle 100,000 requests per second, each 1 kB in size, looks very different from a system that is designed for 3 requests per minute, each 2 GB in size—even though the two systems have the same data throughput.

例如,一个被设计为每秒处理100,000个1kB请求的系统,看起来与一个被设计为每分钟处理3个2GB请求的系统非常不同,即使这两个系统具有相同的数据吞吐量。
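The claim that both systems have the same data throughput can be verified directly. The calculation below assumes decimal units (1 kB = 1,000 bytes and 1 GB = 1,000,000,000 bytes); the variable names are chosen for the example.

```python
KB = 1_000            # bytes, decimal units assumed
GB = 1_000_000_000    # bytes

# System A: 100,000 requests per second, each 1 kB.
throughput_a = 100_000 * 1 * KB            # bytes per second

# System B: 3 requests per minute, each 2 GB.
throughput_b = 3 * 2 * GB // 60            # bytes per second

if __name__ == "__main__":
    print("A:", throughput_a // 1_000_000, "MB/s")
    print("B:", throughput_b // 1_000_000, "MB/s")
```

Both work out to 100 MB/s, even though one system handles many tiny requests and the other a handful of very large ones.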

An architecture that scales well for a particular application is built around assumptions of which operations will be common and which will be rare—the load parameters. If those assumptions turn out to be wrong, the engineering effort for scaling is at best wasted, and at worst counterproductive. In an early-stage startup or an unproven product it’s usually more important to be able to iterate quickly on product features than it is to scale to some hypothetical future load.

一种可适用于特定应用的可扩展架构是基于哪些操作是常见的和哪些是罕见的假设——负载参数来构建的。如果这些假设被证明是错误的,那么扩展的工程努力将至少是浪费的,而在最坏的情况下可能是适得其反的。在初创阶段的创业公司或未经验证产品中,能够快速迭代产品特性通常比将来某个假设负载的扩展更加重要。

Even though they are specific to a particular application, scalable architectures are nevertheless usually built from general-purpose building blocks, arranged in familiar patterns. In this book we discuss those building blocks and patterns.

尽管可扩展架构是针对特定应用而设计的,但通常是由常见的通用构建块组成的,排列成熟悉的模式。在本书中,我们将讨论这些构建块和模式。

Maintainability

It is well known that the majority of the cost of software is not in its initial development, but in its ongoing maintenance—fixing bugs, keeping its systems operational, investigating failures, adapting it to new platforms, modifying it for new use cases, repaying technical debt, and adding new features.

众所周知,软件大部分的成本并不在其初期的开发,而是在其持续的维护工作中——修复程序错误,保持系统运行,调查故障,适应新平台,修改新的用例,偿还技术债务以及添加新功能。

Yet, unfortunately, many people working on software systems dislike maintenance of so-called legacy systems—perhaps it involves fixing other people’s mistakes, or working with platforms that are now outdated, or systems that were forced to do things they were never intended for. Every legacy system is unpleasant in its own way, and so it is difficult to give general recommendations for dealing with them.

然而,不幸的是,许多从事软件系统工作的人不喜欢所谓的遗留系统维护——或许这涉及修复他人的错误,或者使用已经过时的平台,或者系统被迫执行其从未打算过的功能。每个遗留系统都有其自己的不愉快之处,因此很难对处理它们给出一般性建议。

However, we can and should design software in such a way that it will hopefully minimize pain during maintenance, and thus avoid creating legacy software ourselves. To this end, we will pay particular attention to three design principles for software systems:

然而,我们可以并且应该设计软件,使其在维护过程中尽可能减少痛苦,从而避免自己创建遗留软件。 为此,我们将特别注意软件系统的三个设计原则:

- Operability

Make it easy for operations teams to keep the system running smoothly.

让运维团队更轻松地保持系统运行流畅。

- Simplicity

Make it easy for new engineers to understand the system, by removing as much complexity as possible from the system. (Note this is not the same as simplicity of the user interface.)

让新工程师更容易理解系统,尽量从系统中消除复杂性。(注意这与用户界面的简单性不同。)

- Evolvability

Make it easy for engineers to make changes to the system in the future, adapting it for unanticipated use cases as requirements change. Also known as extensibility, modifiability, or plasticity.

为工程师未来轻松修改系统、并根据变化的需求适应未预期的用例提供便捷操作。这也称为可扩展性、可修改性或可塑性。

As with reliability and scalability earlier, there are no easy solutions for achieving these goals. Rather, we will try to think about systems with operability, simplicity, and evolvability in mind.

以前可靠性和可扩展性已经没有简单的解决方案来实现这些目标。相反,我们会尝试考虑在可操作性、简洁性和可变性方面的系统思路。

Operability: Making Life Easy for Operations

It has been suggested that “good operations can often work around the limitations of bad (or incomplete) software, but good software cannot run reliably with bad operations” [ 12 ]. While some aspects of operations can and should be automated, it is still up to humans to set up that automation in the first place and to make sure it’s working correctly.

有人建议“好的操作通常可以克服不好(或不完整)的软件限制,但是好的软件不能可靠地运行不良操作”[12]。虽然某些操作方面可以和应该被自动化,但最终还是需要人类来进行第一次自动化设置,并确保其正确地运行。

Operations teams are vital to keeping a software system running smoothly. A good operations team typically is responsible for the following, and more [ 29 ]:

运维团队对于保持软件系统平稳运行非常重要。一个优秀的运维团队通常负责以下工作,以及更多其他事项 [29]:

- Monitoring the health of the system and quickly restoring service if it goes into a bad state

监控系统的健康状况,如果系统状态不佳,迅速恢复服务。

- Tracking down the cause of problems, such as system failures or degraded performance

追踪问题的原因,例如系统故障或性能降低。

- Keeping software and platforms up to date, including security patches

保持软件和平台更新,包括安全补丁。

- Keeping tabs on how different systems affect each other, so that a problematic change can be avoided before it causes damage

监控不同系统之间的相互影响,以避免出现可能导致破坏的问题性变化。

- Anticipating future problems and solving them before they occur (e.g., capacity planning)

预料未来的问题并在它们发生之前解决它们(例如,容量规划)。

- Establishing good practices and tools for deployment, configuration management, and more

建立好的实践和工具,用于部署、配置管理等方面。

- Performing complex maintenance tasks, such as moving an application from one platform to another

执行复杂的维护任务,例如将一个应用程序从一个平台移动到另一个平台。

- Maintaining the security of the system as configuration changes are made

随着配置变化的发生,维护系统的安全性。

- Defining processes that make operations predictable and help keep the production environment stable

定义使操作可预测并帮助保持生产环境稳定的流程。

- Preserving the organization’s knowledge about the system, even as individual people come and go

保留组织对系统的知识,即使个别人员进出。

Good operability means making routine tasks easy, allowing the operations team to focus their efforts on high-value activities. Data systems can do various things to make routine tasks easy, including:

良好的可操作性意味着使日常任务变得更加容易,使运营团队可以将精力集中在高价值的活动上。数据系统可以采取各种方式来简化日常任务,其中包括:

- Providing visibility into the runtime behavior and internals of the system, with good monitoring

提供对系统运行行为和内部情况的可见性,带有良好的监控。

- Providing good support for automation and integration with standard tools

为自动化提供良好支持并与标准工具进行集成。

- Avoiding dependency on individual machines (allowing machines to be taken down for maintenance while the system as a whole continues running uninterrupted)

避免依赖特定的机器(使机器可以在维护期间关闭,而整个系统仍能无间断地运行)。

- Providing good documentation and an easy-to-understand operational model (“If I do X, Y will happen”)

提供良好的文档和易于理解的操作模型(“如果我做 X,就会发生 Y”)。

- Providing good default behavior, but also giving administrators the freedom to override defaults when needed

提供良好的默认行为,但也给管理员在需要时覆盖默认值的自由。

- Self-healing where appropriate, but also giving administrators manual control over the system state when needed

在必要时进行自愈,但也在需要时赋予管理员手动控制系统状态的能力。

- Exhibiting predictable behavior, minimizing surprises

展示可预测的行为,最小化惊喜。

Simplicity: Managing Complexity

Small software projects can have delightfully simple and expressive code, but as projects get larger, they often become very complex and difficult to understand. This complexity slows down everyone who needs to work on the system, further increasing the cost of maintenance. A software project mired in complexity is sometimes described as a big ball of mud [ 30 ].

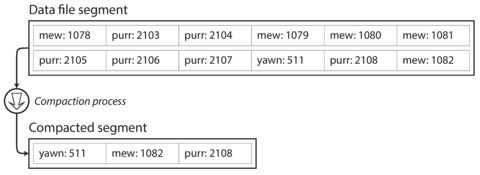

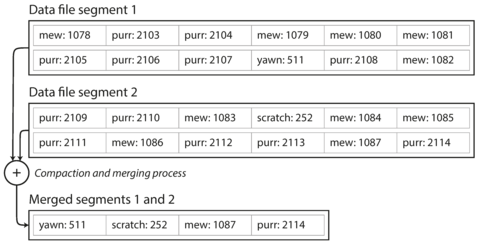

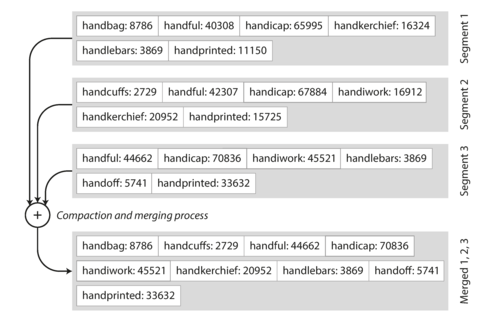

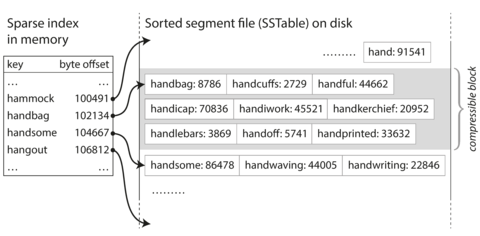

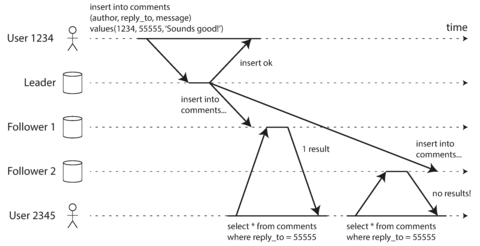

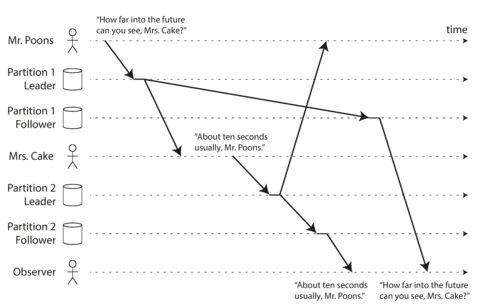

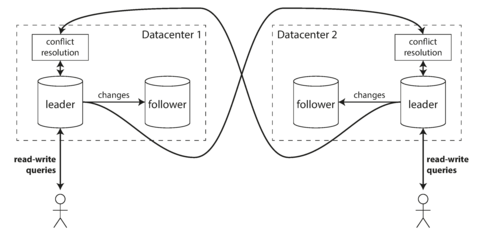

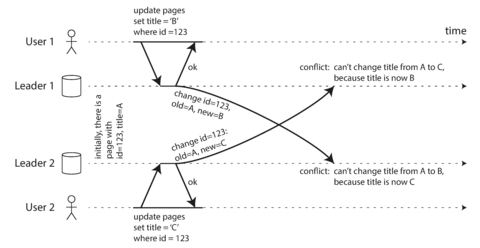

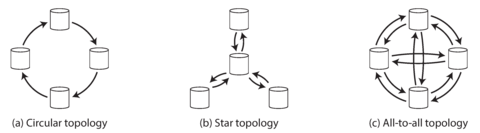

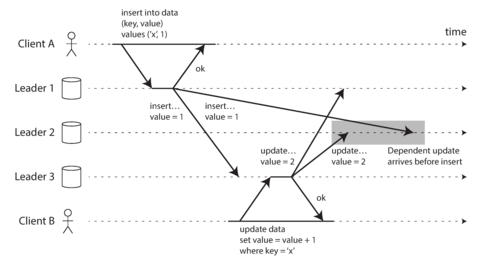

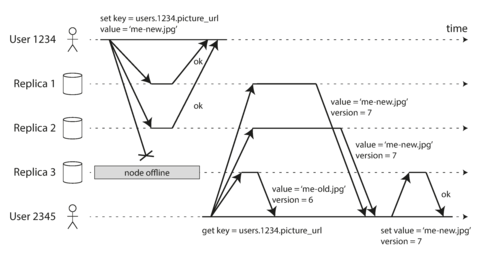

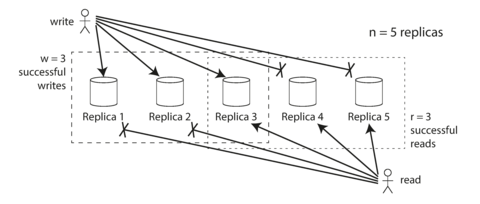

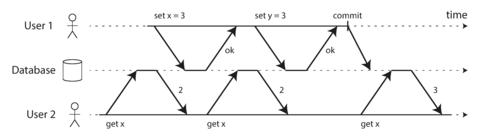

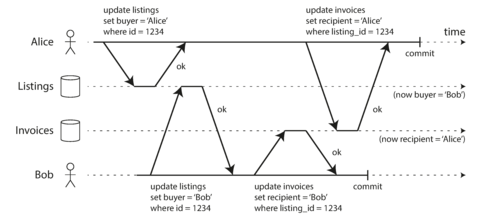

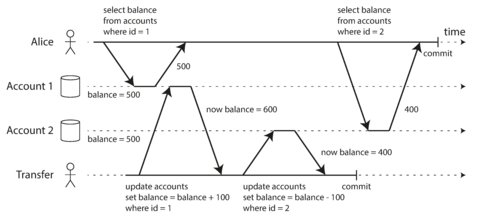

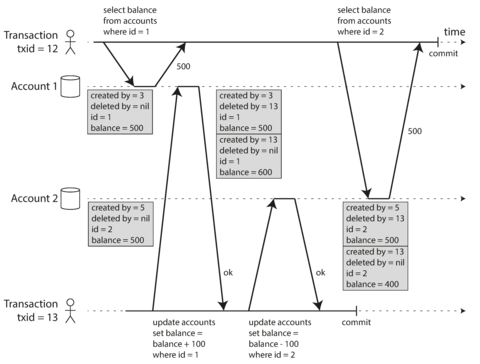

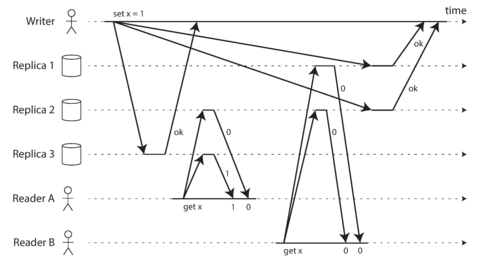

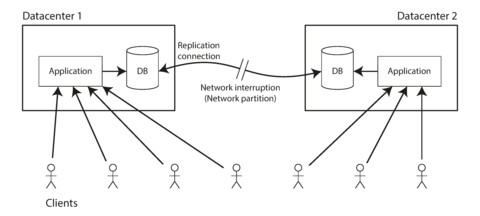

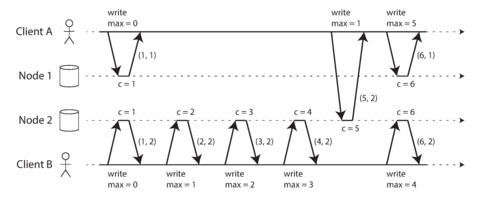

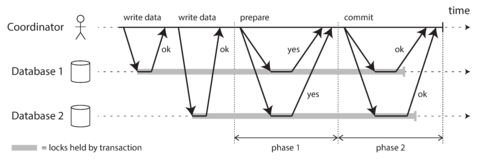

小型软件项目可以拥有简单明了且表达清晰的代码,但随着项目规模的扩大,往往会变得非常复杂且难以理解。这种复杂性会拖慢每一个需要处理该系统的人,进一步增加了维护成本。一个陷入复杂困境的软件项目有时被描述为一个沉重的泥球。[30]